# Install and load the rvest package

install.packages("rvest")

library(rvest)Text Data

4.1 Overview

In educational research, analyzing text data is typically considered the work of qualitative researchers. Traditionally, qualitative research involves identifying patterns in non-numeric data, and this pattern recognition is done manually (Creswell & Poth, 2018). This process is time-intensive but can yield rich results — for example, through human coding of interview transcripts, policy documents, or classroom artifacts (Saldaña, 2021).

With the advent of new software, we can capture and analyze text data in ways that were previously not possible, such as web scraping, accessing social media APIs, or downloading large online documents. Given the increased scale and variety of available text data, analysis now benefits from computational approaches — including dictionary-based, frequency-based, and machine learning methods — that go beyond manual coding (Grimmer et al., 2022; Silge & Robinson, 2017). These methods allow educational researchers to ask new types of research questions, expanding the scope and depth of possible insights. For example, Rosenberg et al. (2021) used computational text analysis to examine public sentiment about science education policy across thousands of social media posts — a scale of analysis not feasible through traditional qualitative methods.

4.2 Accessing Text Data

Text can be found and collected in many different ways. For example, social media can serve as a rich source of text data (e.g., Reddit posts), and text created in classrooms—especially online (e.g., student discussion posts)—can also be analyzed. In this section, we will cover a few basic ways of accessing text data. Please note that although we cover some prominent ways, this is by no means an exhaustive list. Therefore, please refer to the additional resources section at the end of the chapter to dive deeper.

4.2.1 Web Scraping (Unstructured or API)

What is Web Scraping?

Web scraping refers to the automated process of extracting data from web pages. It is particularly useful when dealing with extensive lists of websites that would be tedious to mine manually. A typical web scraping program follows these steps:

- Loads a webpage.

- Downloads the HTML or XML structure.

- Identifies the desired data.

- Converts the data into a format suitable for analysis, such as a data frame.

In addition to text, web scraping can also be used to download other content types, such as audio-visual files.

Is Web Scraping Legal?

Web scraping is not governed by a single universal rule, so researchers should separate platform policy questions from legal and ethical risk assessment (Salganik, 2019). In practice, Terms of Service define platform-level permissions (for example, whether automated collection is allowed), while robots.txt provides crawler guidance; neither should be treated as a complete legal determination. Legal exposure depends on jurisdiction and context (for example, public vs. access-restricted content, personal data handling, and whether technical barriers are bypassed). For educational research, a practical standard is to document collection decisions, minimize personally identifiable data, and align scraping procedures with institutional review and data-governance requirements (Salganik, 2019).

Reading a Web Page into R

Once permissions are confirmed, the first step in web scraping is to download the webpage’s source code into R. In R, a great package to do this is rvest.

To demonstrate, we will scrape a simple Wikipedia page. Static pages, which lack interactive elements like JavaScript, are simpler to scrape. You can view the page’s HTML source in your browser by selecting Developer Tools > View Source.

# Load the webpage

wikipedia_page <- read_html("https://en.wikipedia.org")

# Verify that the webpage loaded successfully

wikipedia_pageParsing HTML

The next challenge is extracting specific information from the HTML structure. HTML files have a “tree-like” format, allowing us to target particular sections. Use your browser’s “Developer Tools” to inspect elements and locate the data. Right-click the desired element and select Inspect to view its structure.

To isolate data sections within the HTML structure, identify the XPath or CSS selectors. For instance:

# Extract specific section using XPath

section_of_wikipedia <- html_node(wikipedia_page, xpath='//*[@id="mw-content-text"]/div/table')

head(section_of_wikipedia)To convert the extracted section into a data frame, use html_table():

# Convert the extracted data into a table

health_rankings <- html_table(section_of_wikipedia)

head(health_rankings[ , (1:2)]) # Display the first two columnsParsing with CSS Selectors

For more complex web pages, CSS selectors can be an alternative to XPath. Tools like Selector Gadget can help identify the required CSS selectors.

For example, to scrape event information from Duke University’s main page:

# Load the webpage

duke_page <- read_html("https://www.duke.edu")

# Extract event information using CSS selector

duke_events <- html_nodes(duke_page, css="li:nth-child(1) .epsilon")

html_text(duke_events)Scraping with Selenium

For tasks involving interactive actions (e.g., filling search fields), use RSelenium, which enables automated browser operations.

To set up Selenium, install the Java SE Development Kit and Docker. Then, start Selenium in R:

# Install and load RSelenium

install.packages("RSelenium")

library(RSelenium)

# Start a Selenium session

rD <- rsDriver()

remDr <- rD$client

remDr$navigate("https://www.duke.edu")To automate data entry, identify the CSS selector for the search box and input the query:

# Find the search box element and enter a query

search_box <- remDr$findElement(using = 'css selector', 'fieldset input')

search_box$sendKeysToElement(list("data science", "\uE007")) # "\uE007" represents Enter keyWeb Scraping within a Loop

To scrape multiple pages, embed the code within a loop to automate tasks across different URLs. Since each site may have a unique structure, generalized scraping can be time-intensive and error-prone. Implement error handling to manage interruptions.

When to Use Web Scraping

Web scraping is appropriate if:

- Page structure is consistent across sites: For example, a government site with date suffixes but a uniform layout.

- Manual data collection is prohibitive: For extensive text or embedded tables.

When feasible, consider alternatives like APIs or data-entry services (e.g., Amazon Mechanical Turk) for better efficiency and legal compliance.

What is an API?

An Application Programming Interface (API) is a set of protocols that allows computers to communicate and exchange information. A common type is a REST API (Representational State Transfer), where clients make stateless HTTP requests (for example, GET and POST) to resource URLs and receive structured responses, often in JSON. APIs provide standardized access to data, services, and functionalities, making them essential in software development.

When to Use an API

APIs are commonly used for:

- Integrating with Third-Party Services: APIs connect applications to services like payment gateways or social media.

- Accessing Data: APIs retrieve data from systems or databases (e.g., real-time weather data).

- Automating Tasks: APIs automate processes within applications, such as email marketing.

- Building New Applications: APIs allow developers to build new apps or services (e.g., a mapping API for navigation).

- Streamlining Workflows: APIs enable seamless communication and data exchange across systems.

Using Reddit API with RedditExtractoR

Reddit is a social media platform featuring a complex network of users and discussions, organized into “subreddits” by topic. RedditExtractoR, an R package, enables data extraction from Reddit to identify trends and analyze interactions.

# Install and load RedditExtractoR

install.packages("RedditExtractoR")

library(RedditExtractoR)

# Access data from the GenAI subreddit

GenAI_reddit <- find_thread_urls(subreddit = "GenAI", sort_by = "new", period = "day")

view(GenAI_reddit)Researchers can search online (or GitHub) to find packages that are specifically designed to capture other platforms, like the Reddit example here.

4.2.2 Audio Transcripts (Zoom, etc.)

Audio transcripts are a rich source of text data, especially useful for capturing spoken content from meetings, interviews, or webinars. Many platforms, such as Zoom, provide automated transcription services that can be downloaded as text files for analysis. By processing these transcripts, researchers can analyze conversation themes, sentiment, or other linguistic features. Here’s how to access and prepare Zoom transcripts for analysis in R.

Key Steps for Extracting Text Data from Audio Transcripts

- Access the Zoom Transcript

- Log in to your Zoom account.

- Navigate to the “Recordings” section.

- Select the recording you wish to analyze and download the “Audio Transcript” file.

- Import the Transcript into R

Once the file is downloaded, you can load it into R for analysis. Depending on the file format (usually a.txtfile with tab or comma delimiters), useread.table(),read.csv(), or functions from thereadrpackage to load the data.

# Load the transcript into R

transcript_data <- read.table("path/to/your/zoom_transcript.txt", sep = "\t", header = TRUE)Adjust the sep parameter based on the delimiter used in the transcript file (typically \t for tab-delimited files).

Data Cleaning (if necessary)

Clean up the text data to remove unnecessary characters, standardize formatting, and prepare it for further analysis.- Remove Unwanted Characters

Usegsub()to eliminate special characters and punctuation, keeping only alphanumeric characters and spaces.

# Remove special characters transcript_data$text <- gsub("[^a-zA-Z0-9 ]", "", transcript_data$text)- Convert Text to Lowercase

Standardize text to lowercase for consistency in text analysis.

- Remove Unwanted Characters

# Convert text to lowercase

transcript_data$text <- tolower(transcript_data$text)4.2.3 PDF

PDF files are a valuable source of text data, often found in research publications, government documents, and industry reports. We’ll explore two main methods for extracting text from PDFs:

Extracting from Local PDF Files: This method involves accessing and parsing text from PDF files stored locally, providing tools and techniques to efficiently retrieve text data from offline documents.

Downloading and Extracting PDF Files: This approach covers downloading PDFs from online sources and extracting their text. This method is useful for scraping publicly available documents from websites or databases for research purposes.

PDF Data Extractor (PDE)

For more advanced PDF text extraction and processing, you can use the PDF Data Extractor (PDE) package. This package provides tools for extracting text data from complex PDF documents, supporting additional customization options for text extraction. PDE is a R package that easily extracts information and tables from PDF files. The PDE_analyzer_i() performs the sentence and table extraction while the included PDE_reader_i() allows the user-friendly visualization and quick-processing of the obtained results.

Steps for Extracting Text from Local PDF Files

- Install and Load the

pdftoolsPackage

Start by installing and loading thepdftoolspackage, which is specifically designed for reading and extracting text from PDF files in R.

install.packages("pdftools")

library(pdftools)- Read the PDF as a Text File

Use thepdf_text()function to read the PDF file into R as a text object. This function returns each page as a separate string in a character vector.

txt <- pdf_text("path/to/your/file.pdf")- Extract Text from a Specific Page

To access a particular page from the PDF, specify the page number in the text vector. For example, to extract text from page 24:

page_text <- txt[24] # page 24- Extract Rows into a List

If the page contains a table or structured text, use thescan()function to read each row as a separate element in a list. ThetextConnection()function converts the page text for processing.

rows <- scan(textConnection(page_text), what = "character", sep = "\n")- Split Rows into Cells

To further parse each row, split it into cells by specifying the delimiter, such as whitespace (using"\\s+"). This converts each row into a list of individual cells.

row <- unlist(strsplit(rows[24], "\\s+")) # Example with the 24th rowSteps for Downloading and Extracting Text from PDF Files

- Download the PDF from the Web

Use thedownload.file()function to download the PDF file from a specified URL. Set the mode to"wb"(write binary) to ensure the file is saved correctly.

link <- paste0(

"http://www.singstat.gov.sg/docs/",

"default-source/default-document-library/",

"publications/publications_and_papers/",

"cop2010/census_2010_release3/",

"cop2010sr3.pdf"

)

download.file(link, "census2010_3.pdf", mode = "wb")- Read the PDF as a Text File

After downloading, read the PDF into R as a text object using thepdf_text()function from thepdftoolspackage. Each page of the PDF will be stored as a string in a character vector.

txt <- pdf_text("census2010_3.pdf")- Extract Text from a Specific Page

Access the desired page (e.g., page 24) by specifying the page number in the character vector.

page_text <- txt[24] # Page 24- Extract Rows into a List

Use thescan()function to split the page text into rows, with each row representing a line of text in the PDF. This creates a list where each line from the page is an element.

rows <- scan(textConnection(page_text), what = "character", sep = "\n")- Loop Through Rows and Extract Data

Starting from a specific row (e.g., row 7), loop over each row. For each row:- Split the text by spaces (

"\\s+") usingstrsplit(). - Convert the result to a vector with

unlist(). - If the third cell in the row is not empty, store the second cell as

nameand the third cell astotal, converting it to a numeric format after removing commas.

- Split the text by spaces (

name <- c()

total <- c()

for (i in 7:length(rows)) {

row <- unlist(strsplit(rows[i], "\\s+"))

if (!is.na(row[3])) {

name <- c(name, row[2])

total <- c(total, as.numeric(gsub(",", "", row[3])))

}

}4.2.4 Survey, Discussions, etc.

Surveys and discussion posts are valuable sources of text data in educational research, providing insights into participants’ perspectives, opinions, and experiences. These data sources often come from open-ended survey responses, online discussion boards, or educational platforms. Extracting and preparing text data from these sources can reveal recurring themes, sentiment, and other patterns that support both quantitative and qualitative analysis. Below are key steps and code examples for processing text data from surveys and discussions in R.

Key Steps for Processing Survey and Discussion Text Data

- Load the Data

Survey and discussion data are typically stored in spreadsheet formats like CSV. Begin by loading this data into R for processing. Here,readris used for reading CSV files withread_csv().

# Install and load necessary packages

install.packages("readr")

library(readr)

# Load data

survey_data <- read_csv("path/to/your/survey_data.csv")- Extract Text Columns

Identify and isolate the relevant text columns. For example, if the text data is in a column named “Response,” you can create a new vector for analysis.

# Extract text data from the specified column

text_data <- survey_data$ResponseData Cleaning

Prepare the text data by cleaning it, removing any unnecessary characters, and standardizing the text. This includes removing punctuation, converting text to lowercase, and handling extra whitespace.- Remove Unwanted Characters

Usegsub()from base R to remove any non-alphanumeric characters, retaining only words and spaces.

- Remove Unwanted Characters

# Remove special characters

text_data <- gsub("[^a-zA-Z0-9 ]", "", text_data)- Convert to Lowercase

Standardize the text by converting all characters to lowercase.

# Convert text to lowercase

text_data <- tolower(text_data)- Remove Extra Whitespace

Remove any extra whitespace that may be left after cleaning.

# Remove extra spaces

text_data <- gsub("\\s+", " ", text_data)- Tokenization and Word Counting (Optional)

If further analysis is needed, such as frequency-based analysis, split the text into individual words (tokenization) or count the occurrence of specific words. Here,dplyris used to organize the word counts.

# Install and load necessary packages

install.packages("dplyr")

library(dplyr)

# Tokenize and count words

word_count <- strsplit(text_data, " ") %>%

unlist() %>%

table() %>%

as.data.frame()4.3 Methods for Analyzing Text Data

In the following section, we will provide a “recipe” for the educational researcher interested in these new methods of analyzing text data to get you from the initial stages of getting the data to running the analyses and the write up. Often left out is also a research question that suits or requires a method. Since we have a data and method-centric approach here, we will backtrack and also provide a research question, so that you can model after it in your own work. Finally, we will provide a sample results and discussions section.

4.3.1 Frequency-Based Analysis

The purpose of the frequency-based approach is to count the number of words as they appear in a text file, whether it is a collection of tweets, documents, or interview transcripts. This approach aligns with the frequency-coding method (e.g., Saldaña ,2021) and can supplement human coding by revealing the most/least commonly occurring words, which can then be compared across dependent variables.

Case Study: Frequency-Based Analysis of GenAI Usage Guidelines in Higher Education

As AI writing tools like ChatGPT become more prevalent, educators are working to understand how best to integrate them within academic settings, while many students and instructors remain uncertain about acceptable use cases. Our research into AI usage guidelines from the top 100 universities in North America aims to identify prominent themes and concerns in institutional policies regarding GenAI.

4.3.1.1 Sample Research Questions

To investigate the nature of AI use policies within higher education institutions, our research questions are:

- RQ1: What are the most frequently mentioned words in university GenAI writing usage policies?

- RQ2: Which keywords reflect common concerns or focal points related to GenAI writing usage in academic settings?

4.3.1.2 Sample Methods

Data Source

The dataset consists of publicly available AI policy texts from 100 universities in the USA. The data was collected from institutional websites, including syllabus guidelines and academic integrity pages, and saved as a CSV file for analysis.

Data Analysis

To analyze the data, we employed a natural language processing (NLP) approach, focusing on word frequency and visualization. We used the tidytext package to tokenize the policy text and the ggplot2 and wordcloud packages to represent the results visually.

4.3.1.3 Analysis

Step 1: Load Required Libraries

Install and load libraries for data processing, visualization, and word cloud generation.

# Install necessary packages if not already installed

# install.packages(c("tibble", "dplyr", "tidytext", "ggplot2", "viridis", "tm", "wordcloud", "wordcloud2", "webshot"))

# Load libraries

library(readr)

library(tibble)

library(dplyr)

library(tidytext)

library(ggplot2)

library(viridis)

library(tm)

library(wordcloud)

library(wordcloud2)

library(webshot)Step 2: Load Data

Read the CSV file containing policy texts from the top 100 universities.

# Load the dataset

university_policies <- read_csv("data/University_GenAI_Policy_Stance.csv")Step 3: Tokenize Text and Count Word Frequency

Process the text data by tokenizing words, removing stop words, and counting word occurrences.

# Tokenize text, remove stop words, and count word frequencies

word_frequency <- university_policies |>

unnest_tokens(word, Stance) |> # Tokenize the 'Stance' column

anti_join(stop_words) |> # Remove common stop words

count(word, sort = TRUE) # Count and sort words by frequency

word_frequency# A tibble: 1,108 × 2

word n

<chr> <int>

1 ai 124

2 students 120

3 chatgpt 80

4 tools 73

5 academic 68

6 policy 35

7 assignments 34

8 instructors 34

9 integrity 33

10 student 32

# ℹ 1,098 more rowsStep 4: Create a Word Cloud

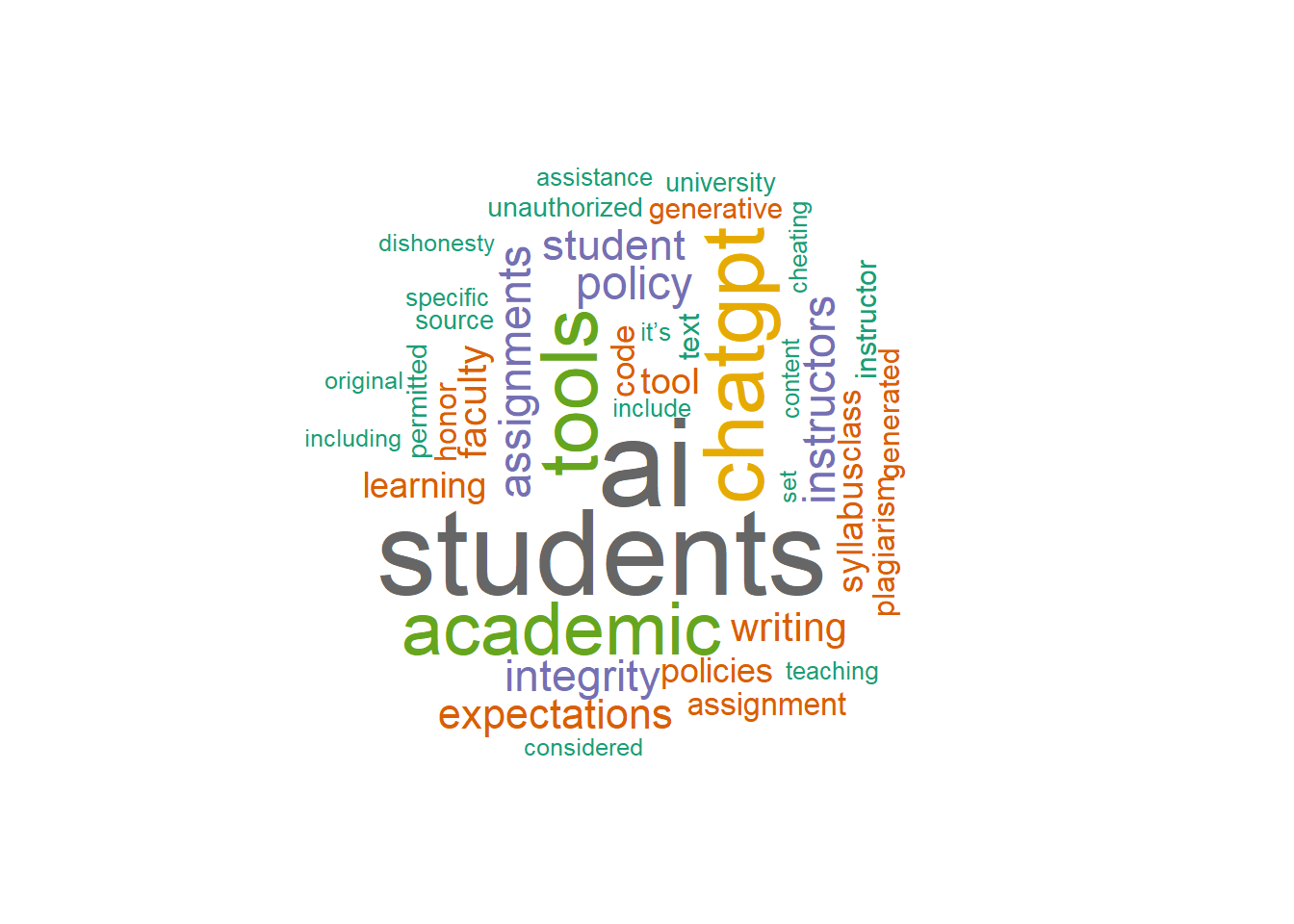

Generate a word cloud to visualize frequent words in an exploratory way. Word clouds are useful for quick pattern scanning, but they are less precise for comparing magnitudes across terms. For quantitative comparison, bar charts are usually more interpretable (Wilke, 2019).

# Create and display the GenAI usage Stance wordcloud

set.seed(1234) # For reproducibility

wordcloud(

words = word_frequency$word,

freq = word_frequency$n,

scale = c(4, 0.5),

random.order = FALSE,

min.freq = 10,

colors = brewer.pal(8, "Dark2"),

rot.per = 0.35

)

Step 5: Visualize Top 12 Words in University Policies

Select the top 12 most frequent words and create a bar chart to visualize the distribution.

# Select the top 12 words

top_words <- word_frequency |>

slice(1:12)

# Generate the bar chart

policy_word_chart <- ggplot(top_words, aes(x = reorder(word, n), y = n, fill = word)) +

geom_bar(stat = "identity") +

coord_flip() +

theme_minimal() +

labs(

title = "Top Words in University GenAI Policies",

x = NULL,

y = "Frequency",

caption = "Source: University AI Policy Text",

fill = "Word"

) +

scale_fill_viridis(discrete = TRUE) +

geom_text(aes(label = n), vjust = 0.5, hjust = -0.1, size = 3)

# Print the bar chart

print(policy_word_chart)

The methods used above can be written up as follows:

Sample Methods

To examine the lexical content of university generative AI policy stances, the text data were first tokenized into individual words and cleaned by removing stop words. Word occurrences were then counted across the corpus to produce a frequency table of the most common terms, following standard tidy text and text mining procedures for corpus-level term analysis.

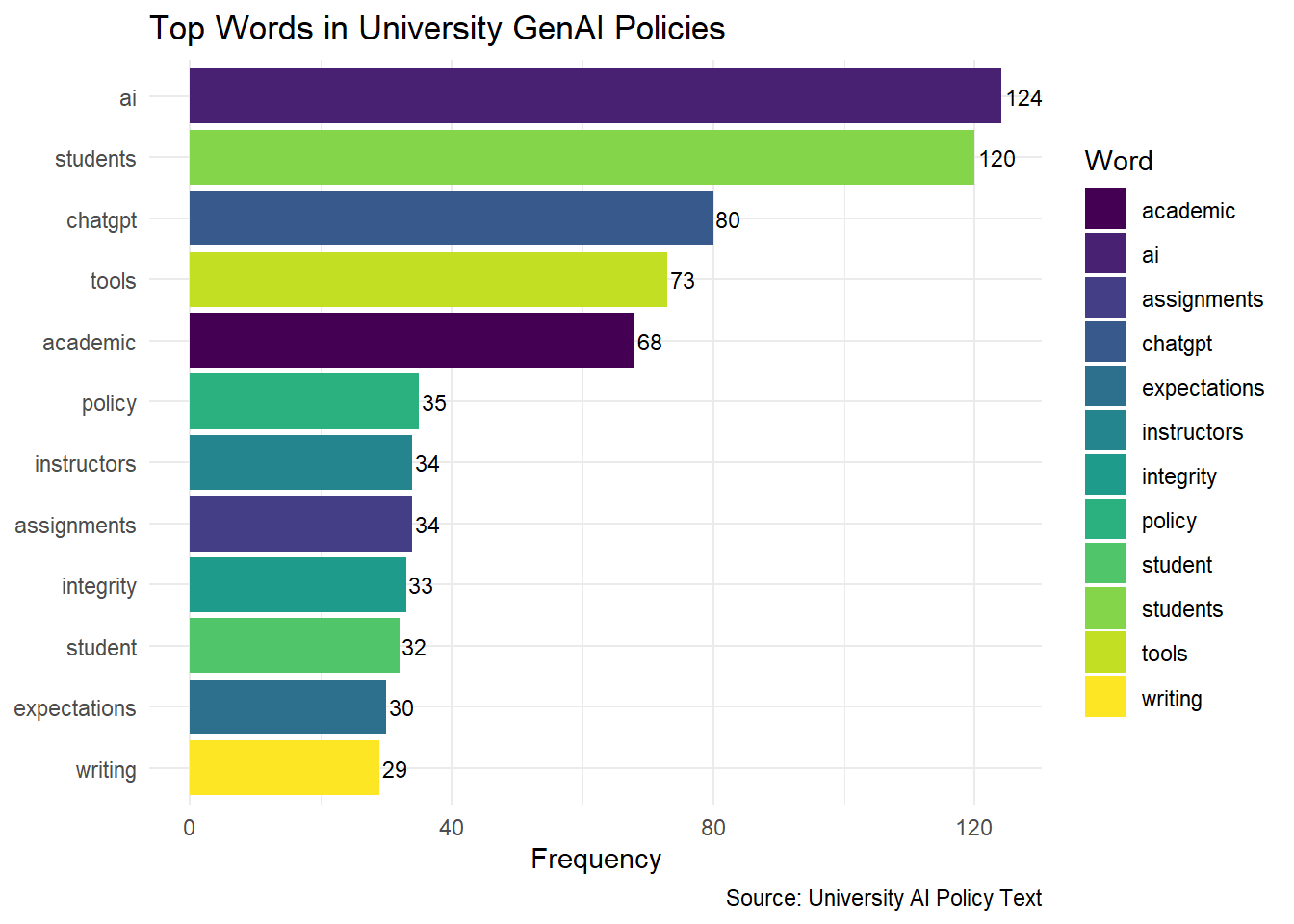

The resulting word-frequency distribution was visualized in two complementary ways. First, a word cloud was generated using the wordcloud package to provide a qualitative overview of term prominence, with larger words representing higher frequency and a fixed random seed used to ensure reproducibility. Second, the twelve most frequent words were displayed in a horizontal bar chart using ggplot2, which offers a clearer quantitative comparison of term counts than the word cloud alone. The bar chart was enhanced with frequency labels and a viridis fill scale to improve readability and distinguish terms visually.

This frequency-based approach provided a straightforward descriptive summary of the policy corpus and helped identify the most salient vocabulary used in institutional GenAI guidance.

4.3.1.4 Results and Discussion

RQ1: What are the most frequently mentioned words in university GenAI writing usage policies?

The results of the frequency analysis showed that keywords such as “assignment,” “student,” and “writing” were among the most commonly mentioned terms across AI policies at 100 universities. This emphasis reflects a focus on using AI tools to support student learning and enhance teaching content. The frequent mention of these words suggests that institutions are considering the role of AI in academic assignments and course design, indicating a strategic commitment to integrating AI within educational tasks and student interactions.RQ2: Which keywords reflect common concerns or focal points related to GenAI writing usage in academic settings?

The analysis of the top 12 frequently mentioned terms highlighted additional focal points, including “tool,” “academic,” “instructor,” “integrity,” and “expectations.” These terms reveal concerns around the ethical use of AI tools, the need for clarity in academic applications, and the central role of instructors in AI policy implementation. Keywords like “integrity” and “expectations” emphasize the importance of maintaining academic standards and setting clear guidelines for AI use in classrooms, while “instructor” underscores the influence faculty members have in shaping AI-related practices. Together, these terms reflect a commitment to transparent policies that support ethical and effective AI integration, enhancing the academic experience for students.

4.3.2 Dictionary-based Analysis

4.3.2.1 Purpose

The purpose of dictionary-based analysis in text data is to assess the presence of predefined categories, like emotions or sentiments, within the text using lexicons or dictionaries. This approach allows researchers to quantify qualitative aspects, such as positive or negative sentiment, based on specific words that correspond to these categories.

Case:

In this analysis, we examine the stance of 100 universities on the use of GenAI by applying the Bing sentiment dictionary. By analyzing sentiment scores, we aim to identify the general tone in these policies, indicating whether the institutions’ attitudes toward GenAI are predominantly positive or negative.

4.3.2.2 Sample Research Questions

- RQ: What is the dominant sentiment expressed in GenAI policy texts across universities, and is it primarily positive or negative?

4.3.2.3 Analysis

Step 1: Install and Load Necessary Libraries

First, install and load the required packages for text processing and visualization.

# Install necessary packages if not already installed

# install.packages(c("tidytext", "tidyverse", "dplyr", "ggplot2", "tidyr"))

# Load libraries

library(tidytext)

library(tidyverse)

library(dplyr)

library(ggplot2)

library(tidyr)Step 2: Load and Prepare Data

Load the GenAI policy stance data from a CSV file. We use the same data as in Section 2.3.

# Load the dataset

university_policies <- read_csv("data/University_GenAI_Policy_Stance.csv")

# Tokenize text, remove stop words, and count word frequencies

word_frequency <- university_policies |>

unnest_tokens(word, Stance) |> # Tokenize the 'Stance' column

anti_join(stop_words) |> # Remove common stop words

count(word, sort = TRUE) # Count and sort words by frequency

word_frequency# A tibble: 1,108 × 2

word n

<chr> <int>

1 ai 124

2 students 120

3 chatgpt 80

4 tools 73

5 academic 68

6 policy 35

7 assignments 34

8 instructors 34

9 integrity 33

10 student 32

# ℹ 1,098 more rowsStep 3: Tokenize Text Data and Apply Sentiment Dictionary

Tokenize the policy text data to separate individual words. Then, use the Bing sentiment dictionary to label each word as positive or negative.

# Tokenize 'Stance' column and apply Bing sentiment dictionary

sentiment_scores <- word_frequency |>

inner_join(get_sentiments("bing")) |> # Join with Bing sentiment lexicon

pivot_wider(names_from = sentiment, values_from = n, values_fill = 0) |>

mutate(sentiment_score = positive - negative) # Calculate net sentiment score

sentiment_scores# A tibble: 142 × 4

word positive negative sentiment_score

<chr> <int> <int> <int>

1 honor 18 0 18

2 cheating 0 11 -11

3 dishonesty 0 10 -10

4 guidance 9 0 9

5 honesty 7 0 7

6 intelligence 7 0 7

7 transparent 7 0 7

8 violation 0 7 -7

9 encourage 6 0 6

10 difficult 0 5 -5

# ℹ 132 more rowsStep 4: Create a Density Plot for Sentiment Distribution

Visualize the distribution of sentiment scores with a density plot, showing the prevalence of positive and negative sentiments across university policies.

# Generate a density plot of sentiment scores

density_plot <- ggplot(sentiment_scores, aes(x = sentiment_score, fill = sentiment_score > 0)) +

geom_density(alpha = 0.5) +

scale_fill_manual(

values = c("red", "green"),

name = "Sentiment",

labels = c("Negative", "Positive")

) +

labs(

title = "Density Plot of University AI Policy Sentiment",

x = "Sentiment Score",

y = "Density",

caption = "Source: University Policy Text"

) +

theme_minimal() +

theme(

plot.title = element_text(face = "bold", size = 14),

axis.text = element_text(size = 14),

axis.title = element_text(face = "bold", size = 14),

plot.caption = element_text(size = 12)

)

# Print the plot

print(density_plot)

The methods used above can be written up as follows:

Sample Methods

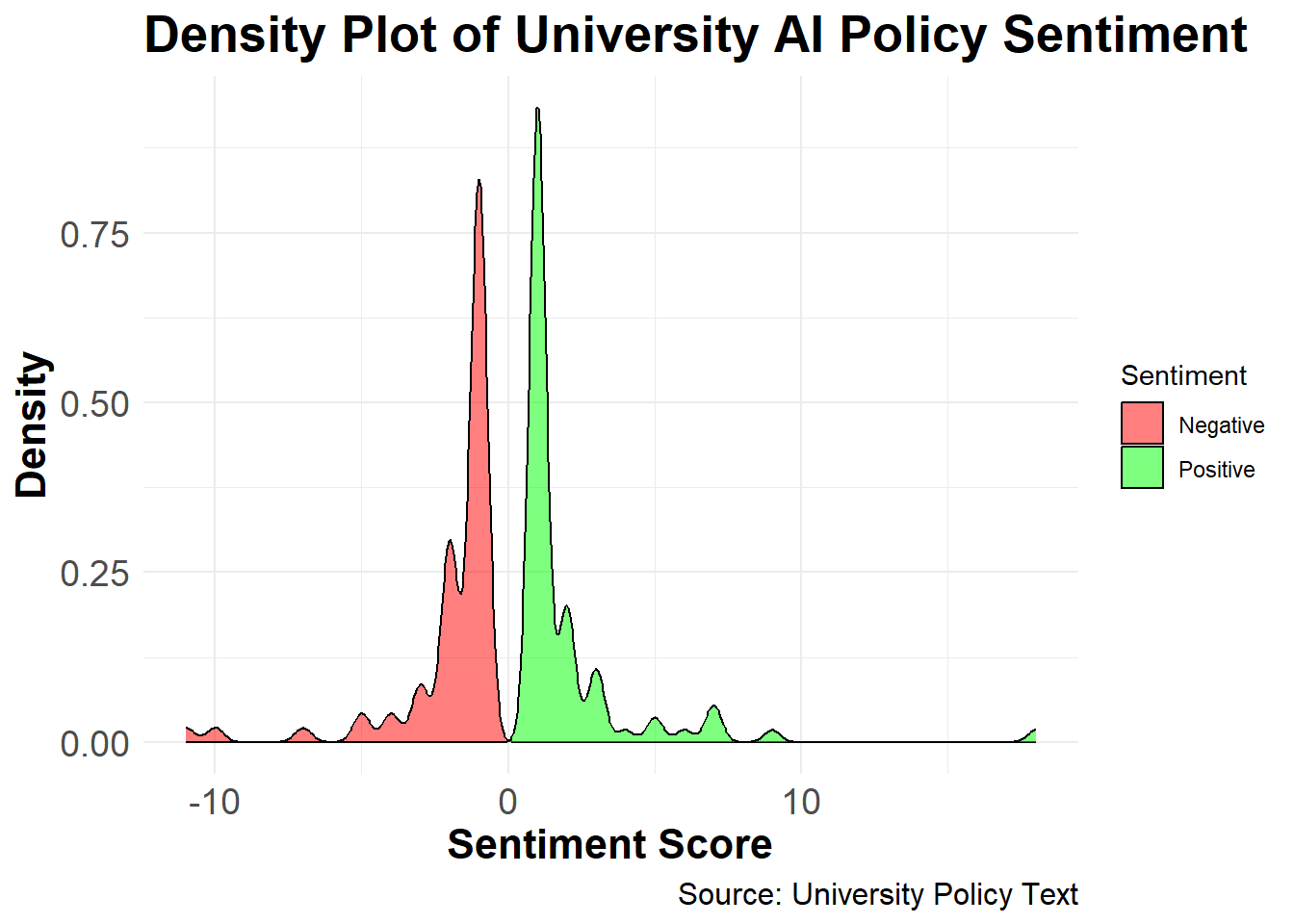

To assess the emotional valence of university policy statements on generative artificial intelligence, the text data were first tokenized into individual words and cleaned by removing stop words. Word frequencies were then computed across the corpus to identify the most commonly used terms in the policy stance texts. This approach follows standard tidy text practices for descriptive text mining and term-frequency analysis.

Sentiment analysis was conducted using the Bing sentiment lexicon implemented in the tidytext package. The Bing lexicon classifies words as either positive or negative, making it well suited for binary polarity assessment in policy discourse. Word-frequency data were joined with the Bing dictionary using

inner_join(), and the sentiment categories were reshaped into a wide format so that positive and negative counts could be compared directly. A net sentiment score was then calculated as the difference between positive and negative word counts, with positive values indicating more favorable tone and negative values indicating more unfavorable tone.This dictionary-based method provides a transparent and reproducible way to quantify sentiment in textual policy documents. It is particularly useful for exploratory analysis because it captures broad polarity patterns while remaining easy to interpret and replicate across documents.

4.3.2.4 Results and Discussion

RQ: What is the dominant sentiment expressed in GenAI policy texts across universities, and is it primarily positive or negative?

The dictionary-based sentiment analysis reveals the prevailing sentiments in university policies on GenAI usage. Using the Bing lexicon to assign positive and negative scores, the density plot illustrates the distribution of sentiment scores across the 100 institutions.The results indicate a balanced perspective with a slight tendency toward positive sentiment, as reflected by a higher density of positive scores. This analysis provides insights into the varying degrees of acceptance and caution universities adopt in their AI policy frameworks, demonstrating the diverse stances that shape institutional AI guidelines.

4.3.3 Clustering-Based Analysis

Clustering-based analysis involves grouping similar text documents or text segments into clusters based on their underlying topics or themes. This approach is particularly useful for identifying dominant themes in text data, such as university AI policy documents.

4.3.3.1 Purpose

Purpose: The goal of clustering-based analysis is to uncover latent themes in text data using unsupervised machine learning techniques. Topic modeling is one popular method for clustering documents into groups based on their content.

Case: Using the GenAI policy texts from 100 universities, we apply Latent Dirichlet Allocation (LDA) to identify dominant themes in these policy documents. This analysis will help categorize policies into overarching themes, such as academic integrity, student support, and instructor discretion.

4.3.3.2 Sample Research Questions

- RQ1: What are the prominent themes present in university policies regarding GenAI usage Stance?

- RQ2: How do these themes reflect the key concerns or opportunities for integrating GenAI in higher education?

4.3.3.3 Analysis

Step 1: Install and Load Necessary Libraries

Install and load the required libraries for text processing and topic modeling.

# Install necessary packages

# install.packages(c("dplyr", "tidytext", "topicmodels", "ggplot2"))

# Load libraries

library(dplyr)

library(tidytext)

library(topicmodels)

library(ggplot2)Step 2: Prepare the Data

Load the data and create a document-term matrix (DTM) for topic modeling.

# Load the dataset

university_policies <- read_csv("data/University_GenAI_Policy_Stance.csv")

# Tokenize text data and count word frequencies by document (university)

# We add a unique ID for each policy to treat it as a document

university_policies <- university_policies |>

mutate(policy_id = row_number())

word_counts <- university_policies |>

unnest_tokens(word, Stance) |>

anti_join(stop_words) |>

count(policy_id, word, sort = TRUE)

# Create Document-Term Matrix

gpt_dtm <- word_counts |>

cast_dtm(document = policy_id, term = word, value = n)

gpt_dtm<<DocumentTermMatrix (documents: 99, terms: 1108)>>

Non-/sparse entries: 2646/107046

Sparsity : 98%

Maximal term length: 17

Weighting : term frequency (tf)# Define range of k values

k_values <- c(2, 3, 4, 5)

# Initialize a data frame to store perplexities

perplexities <- data.frame(k = integer(), perplexity = numeric())

# Calculate perplexity for each k

for (k in k_values) {

lda_model <- LDA(gpt_dtm, k = k, control = list(seed = 1234)) # Fit LDA model

perplexity_score <- perplexity(lda_model, gpt_dtm) # Calculate perplexity

perplexities <- rbind(perplexities, data.frame(k = k, perplexity = perplexity_score))

}

# Plot perplexity vs number of topics

ggplot(perplexities, aes(x = k, y = perplexity)) +

geom_line() +

geom_point() +

labs(

title = "Perplexity vs Number of Topics",

x = "Number of Topics (k)",

y = "Perplexity"

) +

theme_minimal()Step 3: Fit the LDA Model

Fit an LDA model with k = 3 topics.

# Fit the LDA model

lda_model <- LDA(gpt_dtm, k = 3, control = list(seed = 1234))

# View model results

gpt_policy_topics_k3 <- tidy(lda_model, matrix = "beta")

print(gpt_policy_topics_k3)# A tibble: 3,324 × 3

topic term beta

<int> <chr> <dbl>

1 1 ai 0.0565

2 2 ai 0.0182

3 3 ai 0.0450

4 1 tools 0.0455

5 2 tools 0.00904

6 3 tools 0.0136

7 1 students 0.0504

8 2 students 0.0212

9 3 students 0.0446

10 1 chatgpt 0.0216

# ℹ 3,314 more rowsStep 4: Visualize Topics

Extract the top terms for each topic and visualize them.

# Visualizing top terms for each topic

gpt_policy_ap_top_terms_k3 <- gpt_policy_topics_k3 |>

group_by(topic) |>

slice_max(beta, n = 10) |>

ungroup() |>

arrange(topic, -beta)

gpt_policy_ap_top_terms_k3 |>

mutate(term = reorder_within(term, beta, topic)) |>

ggplot(aes(beta, term, fill = factor(topic))) +

geom_col(show.legend = FALSE) +

facet_wrap(~ topic, scales = "free") +

scale_y_reordered() +

labs(

title = "Top Terms per Topic (LDA)",

subtitle = "Analysis of University GenAI Policies",

x = "Beta (Probability of term belonging to topic)",

y = NULL

) +

theme_minimal()

The methods used above can be written up as follows:

Sample Methods

Data Preparation

Text data were obtained from the University GenAI Policy Stance dataset, which contains institutional policy statements related to the adoption and regulation of generative artificial intelligence (GenAI). Each policy document was assigned a unique identifier to facilitate document-level analysis. Text preprocessing was conducted in R using the tidytext framework (Silge & Robinson, 2016). The preprocessing steps included tokenization into unigrams, removal of stop words using the tidytext stop word lexicon, and aggregation of word counts by document. The resulting term frequencies were converted into a document–term matrix (DTM), which served as the input for topic modeling.

Topic Modeling Procedure

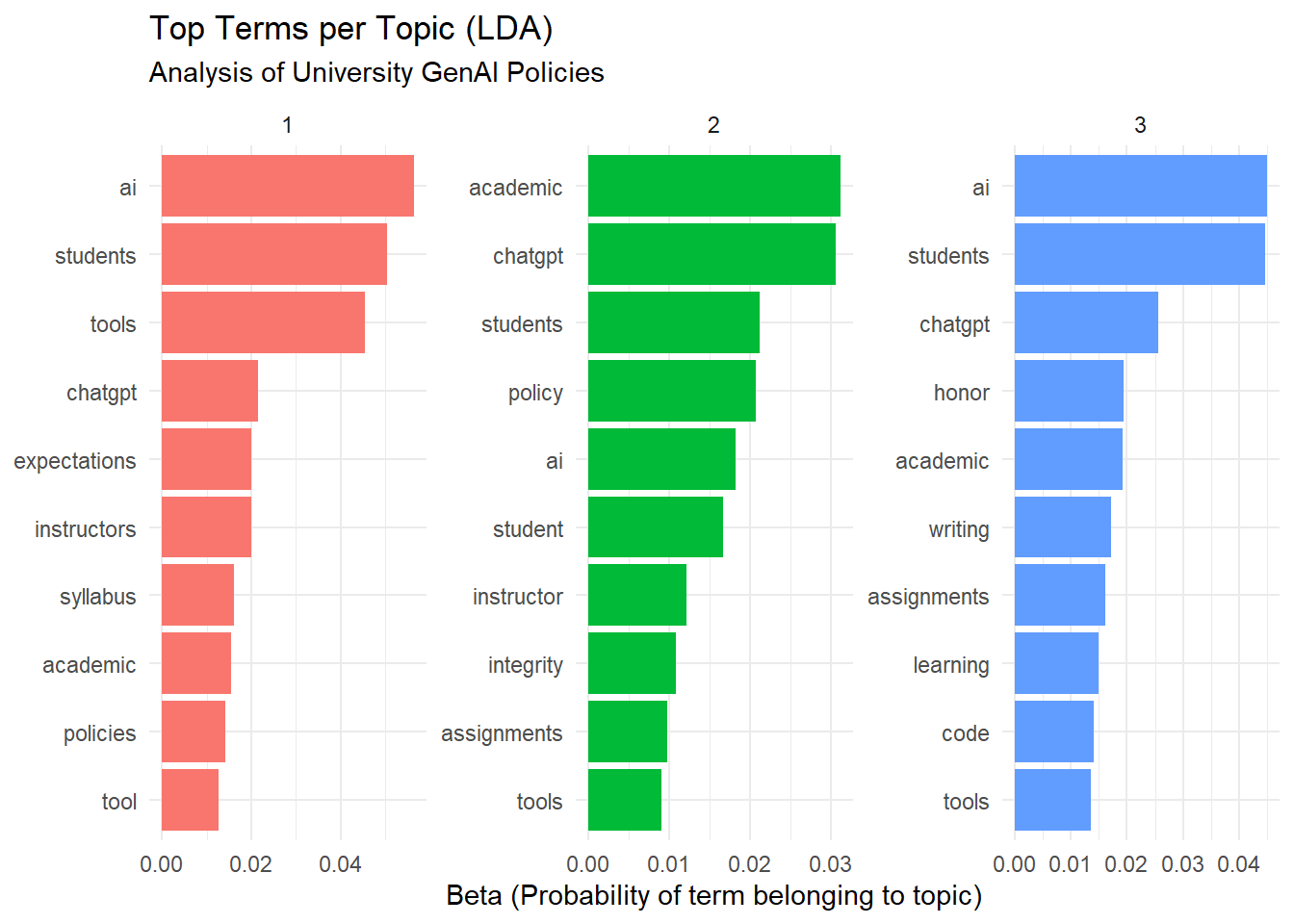

Latent Dirichlet Allocation (LDA) was employed to identify latent themes within the policy texts (Blei et al., 2003), implemented via the topicmodels package (Grün & Hornik, 2011). To determine the optimal number of topics, a range of models with k=2,3,4,k=2,3,4, and 55 topics was fitted, and each model’s perplexity score—a standard measure of model fit in probabilistic topic modeling—was computed. A line plot of perplexity values against topic numbers was used to identify the point of diminishing returns. The model with three topics (k=3k=3) exhibited a balanced trade-off between interpretability and model fit and was selected for further analysis.

Model Fitting and Interpretation

An LDA model with k=3k=3 topics was fitted using a fixed random seed to ensure replicability. The resulting topic-term probabilities (ββ) were extracted and organized to identify the most representative words for each topic. The top ten terms per topic were visualized using ggplot2, allowing for intuitive comparison of thematic content across topics. This visualization facilitated qualitative interpretation of the emergent themes and enabled labeling based on the semantic coherence of high-probability terms.

Computational Environment

All analyses were conducted using R version 4.x within the tidyverse ecosystem. The following packages were utilized: dplyr for data manipulation, tidytext for tokenization and text processing, topicmodels for LDA modeling, and ggplot2 for visualizations. The analysis was executed on a standard workstation environment to ensure reproducibility and computational efficiency.

4.3.3.4 Results and Discussion

Research Question 1:

What are the prominent themes present in university policies regarding GenAI usage?

Answer:

The topic modeling analysis revealed three distinct themes in the university GenAI policies:

Theme 1: Student-Centric Guidelines and Ethical Considerations

Key terms:

students,integrity,tools,instructors,assignmentThis theme emphasizes student usage of GenAI in academic settings, with a focus on ethics (

integrity) and guidelines for instructors to manage assignments involving AI tools.

Theme 2: Academic Standards and Faculty Expectations

Key terms:

students,academic,faculty,honor,expectationsThis theme focuses on maintaining academic integrity and clarifying expectations for faculty and students regarding GenAI usage in assignments and assessments.

Theme 3: Policy-Level Governance and Technology Integration

Key terms:

ai,tools,policy,learning,generativeThis theme revolves around institutional policies on AI integration, highlighting broader governance strategies and how generative AI (like GenAI) fits into learning environments.

Research Question 2:

How do these themes reflect the key concerns or opportunities for integrating GenAI in higher education?

Answer:

The identified themes reflect both concerns and opportunities:

Concerns:

Theme 1: Highlights the ethical challenges, such as ensuring academic integrity when students use AI tools in their coursework. Institutions are keen on setting clear guidelines for both students and instructors to avoid misuse.

Theme 2: Underlines the potential for conflict between maintaining academic standards (

honor,expectations) and leveraging AI to support learning. This shows a cautious approach to integrating AI while upholding traditional values.Theme 3: Raises policy-level questions on AI governance, such as whether existing institutional frameworks are adequate to regulate emerging generative AI technologies.

Opportunities:

Theme 1: Presents a chance to redefine how students interact with AI tools to foster responsible and innovative usage, particularly for assignments and creative tasks.

Theme 2: Encourages collaboration between faculty and administration to develop robust expectations and support systems for integrating AI in the classroom.

Theme 3: Offers a strategic opportunity for universities to lead in AI adoption by establishing comprehensive policies that guide AI’s role in education and research.

Discussion:

The topic modeling results suggest that universities are navigating a complex landscape of opportunities and challenges as they incorporate GenAI into academic contexts. While student-centric policies aim to balance innovation with ethical considerations, institutional-level themes signal the need for governance frameworks to ensure responsible AI use. These findings indicate that higher education institutions are positioned to play a pivotal role in shaping the future of generative AI in learning, provided they address the ethical, pedagogical, and policy challenges identified in this analysis.

References:

Saldaña, J. (2021). Coding techniques for quantitative and mixed data. The Routledge reviewer’s guide to mixed methods analysis, 151-160.

4.4 Summary

This chapter demonstrated three approaches to computational text analysis — frequency-based analysis, sentiment analysis, and topic modeling — each applied to GenAI usage guidelines from U.S. universities. Together, these methods illustrate how educational researchers can systematically analyze large text corpora using R. Readers wishing to deepen their understanding can explore the following resources:

Text Mining and Topic Modeling

Silge, J., & Robinson, D. (2017). Text Mining with R: A Tidy Approach (free online book; Chapters 2–3 on sentiment and 3–4 on topic modeling).

Blei, D. M., Ng, A. Y., & Jordan, M. I. (2003). Latent Dirichlet Allocation (foundational paper on LDA).

R‑Based Text Analysis

CRAN documentation for

tidytext,topicmodels,dplyr, andggplot2, which together form the core toolkit used in this analysis.Online tutorials titled “topic modeling with tidytext and LDA” or “word frequency and word clouds in R” for step‑by‑step implementation.

Sentiment and Lexicon‑Based Methods

Liu, B. (2012). Sentiment Analysis and Opinion Mining (introductory overview of dictionary‑based and machine‑learning approaches).

Documentation for the Bing sentiment lexicon and VADER, which underpin the polarity‑score methods used here.

Practice‑Oriented Extensions

- Open‑source R notebooks or blog posts on “university policy text analysis,” “sentiment analysis of policy documents,” or “topic modeling of educational texts” to see closely related applied examples.