Numeric Data

6.1 Overview

In educational research, data has traditionally been sourced from small-scale surveys, experiments, or qualitative studies. However, the rise of large-scale digital data offers researchers opportunities to explore numeric and quantitative datasets of unprecedented scale and variety (Baker & Inventado, 2014; Romero & Ventura, 2010, 2020). This chapter discusses how to access and analyze two prominent examples: large-scale assessments (e.g., PISA, TIMSS, NAEP) and digital log-trace data such as the Open University Learning Analytics Dataset, or OULAD (Kuzilek et al., 2017). Studies such as Teig et al. (2022) illustrate how large-scale assessment data can be used to investigate substantive educational questions at scale. These secondary data sources enable novel research questions and methods, particularly when paired with machine learning and statistical modeling approaches (James et al., 2021).

Portions of this chapter adapt instructional and research resources developed through the NSF-supported Learning Analytics in STEM Education Research (LASER) Institute. See the Preface for the full acknowledgment and disclaimer.

6.2 Accessing Big Data (Broadening the Horizon)

6.2.1 Big Data

Accessing PISA Data

The Programme for International Student Assessment (PISA) is a widely used dataset for large-scale educational research. It assesses 15-year-old students’ knowledge and skills in reading, mathematics, and science across multiple countries. Researchers can access PISA data through various methods:

1. Direct Download from the Official Website

The OECD provides direct access to PISA data files via its official website. Researchers can download data for specific years and cycles. Data files are typically provided in .csv or .sav (SPSS) formats, along with detailed documentation.

- Steps to Access PISA Data from the OECD Website:

- Visit the OECD PISA website.

- Navigate to the “Data” section.

- Select the desired assessment year (e.g., 2022).

- Download the data and accompanying codebooks.

2. Using the OECD R Package

The OECD R package provides a direct interface to download and explore datasets published by the OECD, including PISA.

- Steps to Use the OECD Package:

- Install and load the OECD package.

- Use the get_datasets() and get_dataset() functions (note: the API may vary by package version).

# Install and load the OECD package

# install.packages("OECD")

library(OECD)

# Fetch PISA data for the 2022 cycle (Example)

pisa_data <- get_dataset("PISA_2022")

# Display a summary of the data

summary(pisa_data)

3. Using the EdSurvey R Package

The EdSurvey package is designed specifically for analyzing large-scale assessment data, including PISA. It allows for complex statistical modeling and supports handling weights and replicate weights used in PISA.

- Steps to Use the EdSurvey Package:

- Install and load the EdSurvey package.

- Download the PISA data from the OECD website and provide the path to the .sav files.

- Load the data into R using readPISA().

# Install and load the EdSurvey package

# install.packages("EdSurvey")

library(EdSurvey)

# Read PISA data from a local folder (readPISA() expects the directory that holds the downloaded files)

pisa_data <- readPISA(path = "path/to/PISA/2022", countries = "usa")

# Display the structure of the dataset

str(pisa_data)

Comparison of Methods

| Method | Advantages | Disadvantages |
|---|---|---|
| Direct Download | Full access to all raw data and documentation. | Requires manual processing and cleaning. |
| OECD Package | Easy to use for downloading specific datasets. | Limited to OECD-published formats. |
| EdSurvey Package | Supports advanced statistical analysis and weights. | Requires additional setup and dependencies. |
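The weights and replicate weights that EdSurvey handles exist because PISA draws a complex sample, so standard errors cannot be computed as if students were sampled independently. The base R sketch below illustrates the general shape of a replicate-weight standard error on simulated data. Everything in it is hypothetical: the data frame, the number of replicates, the Fay factor, and the randomly generated stand-in replicate weights. It is not PISA's actual procedure, which also involves plausible values and is what EdSurvey automates.

```r
# Illustrative only: simulated scores and stand-in replicate weights (all assumptions)
set.seed(42)
n_students <- 500
n_reps <- 80     # hypothetical number of replicate weights
fay_k <- 0.5     # Fay adjustment factor assumed for this sketch

toy <- data.frame(
  score   = rnorm(n_students, mean = 500, sd = 100),
  w_final = runif(n_students, min = 0.5, max = 2)
)

# Stand-in replicate weights: each replicate scales each final weight by 0.5 or 1.5
rep_factors <- matrix(
  sample(c(fay_k, 2 - fay_k), n_students * n_reps, replace = TRUE),
  nrow = n_students, ncol = n_reps
)
rep_weights <- toy$w_final * rep_factors

# Point estimate with the final weight, then one estimate per replicate weight
est_full <- weighted.mean(toy$score, toy$w_final)
est_reps <- apply(rep_weights, 2, function(w) weighted.mean(toy$score, w))

# Replicate-based variance with a Fay adjustment, and the resulting standard error
var_rep <- sum((est_reps - est_full)^2) / (n_reps * (1 - fay_k)^2)
se_rep <- sqrt(var_rep)

c(estimate = est_full, std_error = se_rep)
```

With real PISA files the replicate weights come from the dataset itself, and a dedicated package is the safer route than hand-rolled code.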

Accessing IPEDS Data

The Integrated Postsecondary Education Data System (IPEDS) is a comprehensive source of data on U.S. colleges, universities, and technical and vocational institutions. It provides data on enrollments, completions, graduation rates, faculty, finances, and more. Researchers and policymakers widely use IPEDS data to analyze trends in higher education.

There are several ways to access IPEDS data, depending on the user’s needs and technical proficiency.

1. Direct Download from the NCES Website

The most straightforward way to access IPEDS data is by downloading it directly from the National Center for Education Statistics (NCES) website.

- Steps to Access IPEDS Data:

- Visit the IPEDS Data Center.

- Click on “Use the Data” and navigate to the “Download IPEDS Data Files” section.

- Select the desired data year and survey component (e.g., Fall Enrollment, Graduation Rates).

- Download the data files, typically provided in .csv or .xls format, along with accompanying codebooks.

2. Using the ipeds R Package

The ipeds R package simplifies downloading and analyzing IPEDS data directly from R by connecting to the NCES data repository.

- Steps to Use the ipeds Package:

- Install and load the ipeds package.

- Use the download_ipeds() function to fetch data for specific survey components and years.

# Install and load the ipeds package

# install.packages("ipeds")

library(ipeds)

# Download IPEDS data for completions in 2021

ipeds_data <- download_ipeds("C", year = 2021)

# View the structure of the downloaded data

str(ipeds_data)

3. Using the tidycensus R Package

The tidycensus package is designed for Census Bureau data and does not serve IPEDS files directly. It retrieves Census and American Community Survey (ACS) estimates, such as school enrollment by place, that can be linked to IPEDS institutions through their location.

- Steps to Use the tidycensus Package:

- Install and load the tidycensus package.

- Set up a Census API key to access the data.

- Query ACS variables for the geographies where the institutions of interest are located.

# Install and load the tidycensus package

# install.packages("tidycensus")

library(tidycensus)

# Set Census API key (replace with your actual key)

census_api_key("your_census_api_key")

# Fetch ACS school-enrollment estimates by place (context that can be linked to IPEDS institutions)

ipeds_institutions <- get_acs(

geography = "place",

variables = "B14002_003",

year = 2021,

survey = "acs5"

)

# View the first few rows

head(ipeds_institutions)

4. Using Online Tools

IPEDS provides several online tools for querying and visualizing data without requiring programming skills.

- Common Tools:

- IPEDS Data Explorer: Enables users to query and export customized datasets.

- Trend Generator: Allows users to visualize trends in key metrics over time.

- IPEDS Use the Data: Simplified tool for accessing pre-compiled datasets.

- Steps to Use the IPEDS Data Explorer:

- Visit the IPEDS Data Explorer.

- Select variables of interest, such as institution type, enrollment size, or location.

- Filter results by years, institution categories, or other criteria.

- Export the results as a .csv or .xlsx file.

Comparison of Methods

| Method | Advantages | Disadvantages |
|---|---|---|
| Direct Download | Full access to raw data and documentation. | Requires manual data preparation and cleaning. |
| ipeds Package | Automated access to specific components. | Limited flexibility for customized queries. |
| tidycensus Package | Allows integration with Census and ACS data. | Requires API setup and advanced R skills. |
| Online Tools | User-friendly and suitable for non-coders. | Limited to predefined queries and exports. |

Accessing Open University Learning Analytics Dataset (OULAD)

The Open University Learning Analytics Dataset (OULAD) is a publicly available dataset designed to support research in educational data mining and learning analytics. It includes student demographics, module information, interactions with the virtual learning environment (VLE), and assessment scores.

- Steps to Access OULAD Data:

- Visit the OULAD Repository.

- Download the dataset as a .zip file.

- Extract the .zip file to a local directory.

The dataset contains multiple CSV files:

- studentInfo.csv: Student demographics and performance data.

- studentVle.csv: Interactions with the VLE.

- vle.csv: Details of learning resources.

- studentAssessment.csv: Assessment scores.

Loading OULAD Data in R

Once the data is downloaded and extracted, follow these steps to load and access it in R:

# Install necessary packages

# install.packages(c("readr", "dplyr"))

library(readr)

library(dplyr)

# Define the path to the OULAD data

data_path <- "path/to/OULAD/"

# Load individual CSV files

student_info <- read_csv(file.path(data_path, "studentInfo.csv"))

student_vle <- read_csv(file.path(data_path, "studentVle.csv"))

vle <- read_csv(file.path(data_path, "vle.csv"))

student_assessment <- read_csv(file.path(data_path, "studentAssessment.csv"))

# Inspect the structure and contents

head(student_info)

str(student_vle)

6.3 Logistic Regression & Machine Learning

6.3.1 Purpose and Case

Purpose

Logistic regression is a supervised learning technique widely used for binary classification tasks. It models the probability of an event occurring (e.g., success vs. failure) based on a set of predictor variables. Logistic regression is particularly effective in educational research for predicting outcomes such as retention, enrollment, or graduation rates.
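To make "models the probability of an event" concrete: logistic regression computes a linear combination of the predictors (the log-odds) and passes it through the logistic function to obtain a probability between 0 and 1. The short base R sketch below shows that mapping. The coefficients b0 and b1 and the predictor values are made up for illustration and are not estimates from this chapter.

```r
# Hypothetical coefficients for a one-predictor model (illustrative values only)
b0 <- -2
b1 <- 0.8
x <- c(0, 1, 2.5, 5)

# Linear predictor on the log-odds scale
log_odds <- b0 + b1 * x

# Logistic (inverse-logit) transformation, written out and via the built-in plogis()
prob_manual <- 1 / (1 + exp(-log_odds))
prob_builtin <- plogis(log_odds)

data.frame(x, log_odds, prob = round(prob_builtin, 3))
```

A log-odds of zero corresponds to a probability of 0.5, which is why 0.5 is the conventional cutoff when predicted probabilities are turned into class labels.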

Case Study: Predicting Graduation Rates

This case study is based on IPEDS data and inspired by Zong and Davis (2022). We predict graduation rates as a binary outcome (good_grad_rate) using institutional features such as total enrollment, admission rate, tuition fees, and average instructional staff salary.

6.3.2 Sample Research Questions

- RQ A: What institutional factors are associated with high graduation rates in U.S. four-year universities?

- RQ B: How accurately can we predict high graduation rates using institutional features with supervised machine learning?

6.3.3 Analysis

Step 1: Load Required Packages

We load necessary R packages for data wrangling, cleaning, and modeling.

# Load necessary libraries for data cleaning, wrangling, and modeling

library(tidyverse) # For data manipulation and visualization

library(tidymodels) # For machine learning workflows

library(janitor) # For cleaning variable names

Step 2: Load and Clean Data

We read the IPEDS dataset and clean column names for easier handling.

# Read in IPEDS data from CSV file

ipeds <- read_csv("data/ipeds-all-title-9-2022-data.csv")

# Clean column names for consistency and usability

ipeds <- ipeds |>

clean_names()

Step 3: Data Wrangling

Select relevant variables, filter the dataset, and create the dependent variable good_grad_rate.

# Select and rename key variables; filter relevant institutions

ipeds <- ipeds |>

select(

name = institution_name, # Institution name

total_enroll = drvef2022_total_enrollment, # Total enrollment

pct_admitted = drvadm2022_percent_admitted_total, # Admission percentage

tuition_fees = drvic2022_tuition_and_fees_2021_22, # Tuition fees

grad_rate = drvgr2022_graduation_rate_total_cohort, # Graduation rate

# Financial aid

percent_fin_aid =

sfa2122_percent_of_full_time_first_time_undergraduates_awarded_any_financial_aid,

# Staff salary

avg_salary =

drvhr2022_average_salary_equated_to_9_months_of_full_time_instructional_staff_all_ranks

) |>

filter(!is.na(grad_rate)) |> # Remove rows with missing graduation rates

mutate(

# Create binary dependent variable for high graduation rates (median split or threshold)

good_grad_rate = if_else(grad_rate > 62, 1, 0),

good_grad_rate = as.factor(good_grad_rate) # Convert to factor for classification

)

Step 4: Exploratory Data Analysis (EDA)

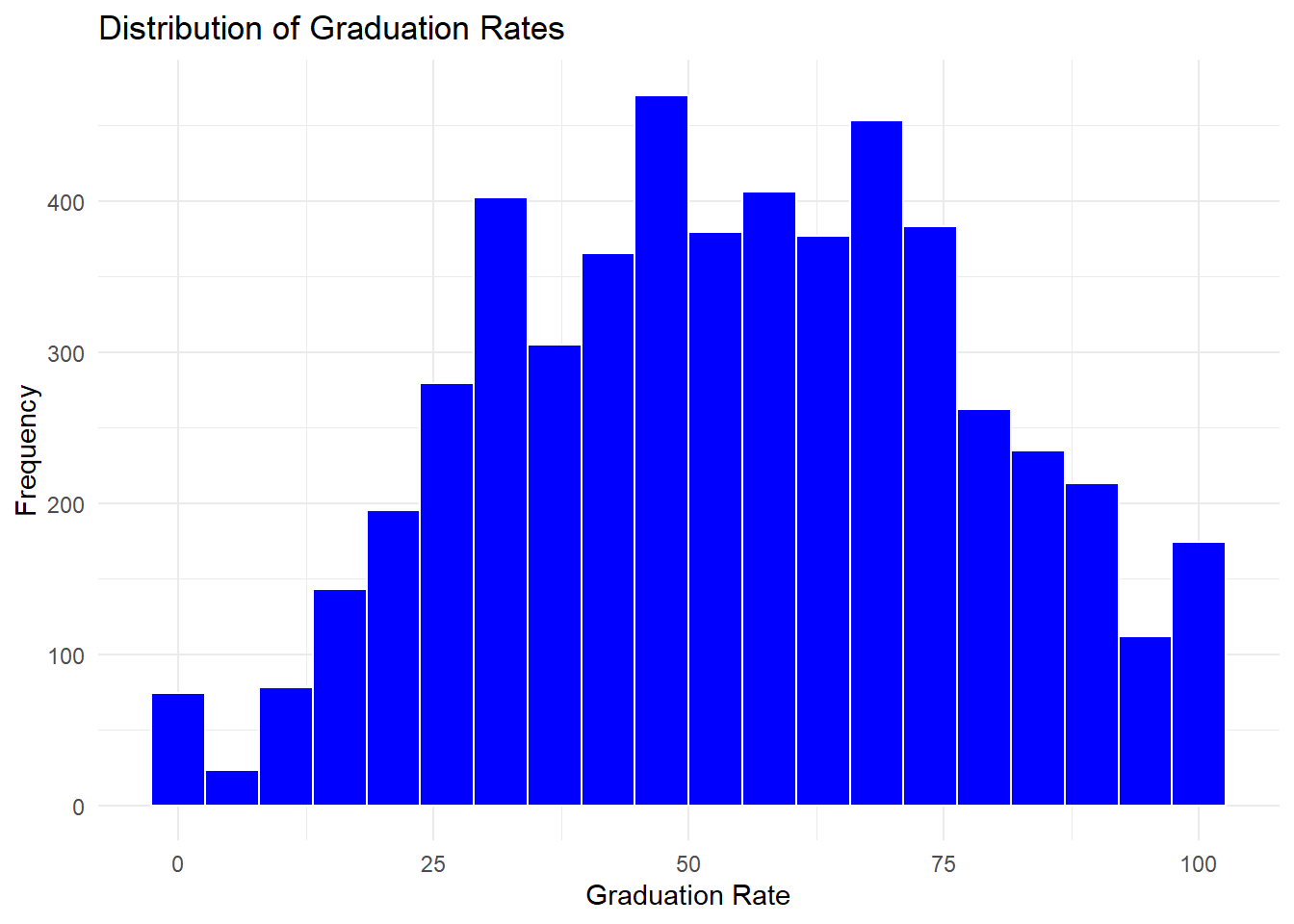

Visualize the distribution of the graduation rate.

# Plot a histogram of graduation rates

ipeds |>

ggplot(aes(x = grad_rate)) +

geom_histogram(bins = 20, fill = "blue", color = "white") +

theme_minimal() +

labs(

title = "Distribution of Graduation Rates",

x = "Graduation Rate",

y = "Frequency"

)

Step 5: Logistic Regression Model

Fit a logistic regression model to predict high graduation rates.

# Fit logistic regression model

m1 <- glm(

good_grad_rate ~ total_enroll + pct_admitted + tuition_fees + percent_fin_aid + avg_salary,

data = ipeds,

family = "binomial" # Specify logistic regression for binary outcome

)

# View model summary

summary(m1)

Call:

glm(formula = good_grad_rate ~ total_enroll + pct_admitted +

tuition_fees + percent_fin_aid + avg_salary, family = "binomial",

data = ipeds)

Coefficients:

Estimate Std. Error z value Pr(>|z|)

(Intercept) -8.742e-01 6.237e-01 -1.402 0.161

total_enroll 3.350e-05 7.880e-06 4.251 2.13e-05 ***

pct_admitted -1.407e-02 3.519e-03 -3.997 6.40e-05 ***

tuition_fees 6.952e-05 4.965e-06 14.003 < 2e-16 ***

percent_fin_aid -2.960e-02 5.652e-03 -5.237 1.64e-07 ***

avg_salary 2.996e-05 3.870e-06 7.740 9.91e-15 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

(Dispersion parameter for binomial family taken to be 1)

Null deviance: 2277 on 1706 degrees of freedom

Residual deviance: 1632 on 1701 degrees of freedom

(3621 observations deleted due to missingness)

AIC: 1644

Number of Fisher Scoring iterations: 5

Step 6: Supervised Machine Learning Workflow

Use the tidymodels framework to build a machine learning model.

# Define recipe for the model (preprocessing steps)

my_rec <- recipe(good_grad_rate ~ total_enroll + pct_admitted + tuition_fees + percent_fin_aid + avg_salary, data = ipeds)

# Specify logistic regression model with tidymodels

my_mod <- logistic_reg() |>

set_engine("glm") |> # Use glm engine for logistic regression

set_mode("classification") # Specify binary classification task

# Create workflow to connect recipe and model

my_wf <- workflow() |>

add_recipe(my_rec) |>

add_model(my_mod)

# Fit the logistic regression model

fit_model <- fit(my_wf, ipeds)

# Generate predictions on the dataset

predictions <- predict(fit_model, ipeds) |>

bind_cols(ipeds) # Combine predictions with original data

# Calculate and display accuracy

my_accuracy <- predictions |>

metrics(truth = good_grad_rate, estimate = .pred_class) |>

filter(.metric == "accuracy")

my_accuracy

# A tibble: 1 × 3

.metric .estimator .estimate

<chr> <chr> <dbl>

1 accuracy binary 0.800

A sample methods section based on these analyses can be written as follows:

Measures

Outcome variable. Graduation performance was operationalized as a binary variable (good_grad_rate), coded 1 if an institution’s overall graduation rate exceeded 62% and 0 otherwise. The threshold of 62% corresponds to the approximate median of the sample distribution and follows the operationalization used by Zong and Davis (2022).

Predictor variables. Five institutional characteristics were included: (1) total student enrollment, (2) percent of applicants admitted, (3) tuition and fees for the 2021–22 academic year, (4) percent of full-time, first-time undergraduates receiving any form of financial aid, and (5) average instructional staff salary equated to nine months of full-time employment. Variable names and definitions follow the IPEDS data dictionary (NCES, 2022).

Analytical Approach

Two complementary analytic strategies addressed the study’s research questions. To examine which institutional factors are associated with high graduation rates (RQ A), we estimated a binary logistic regression model using the glm() function in R with a binomial family and the default logit link. This approach yields coefficient estimates and significance tests for each predictor, supporting interpretation of the direction and magnitude of associations (Hosmer et al., 2013).

To evaluate the predictive accuracy of the same institutional features (RQ B), we fit an equivalent logistic regression model within a supervised machine learning workflow using the tidymodels framework (Kuhn & Wickham, 2020). This pipeline integrated preprocessing via the recipes package and model specification via parsnip, producing a unified, reproducible workflow consistent with current best practices in applied machine learning (Silge & Kuhn, 2022). Model performance was evaluated using overall classification accuracy on the full dataset. All analyses were conducted in R (version 4.3.x).

6.3.4 Results and Discussion

Logistic Regression Model (RQ A)

The logistic regression model predicted whether an institution achieved a high graduation rate (graduation rate > 62%) from five institutional features. All predictors were statistically significant.

Total enrollment was positively associated with high graduation rates (b = 3.35e-05, z = 4.25, p < .001), indicating that larger institutions were more likely to exceed the graduation rate threshold. The percent of applicants admitted was negatively associated with high graduation rates (b = -0.014, z = -4.00, p < .001). In other words, more selective institutions (those admitting a smaller share of applicants) were more likely to exceed the threshold, consistent with prior evidence that such institutions tend to enroll students with stronger prior academic preparation (Zong & Davis, 2022). Tuition and fees had the largest test statistic in the model (b = 6.95e-05, z = 14.00, p < .001); institutions charging higher tuition were substantially more likely to achieve high graduation rates, a pattern that likely reflects broader institutional resource capacity rather than tuition level per se. The proportion of students receiving financial aid was negatively associated with high graduation rates (b = -0.030, z = -5.24, p < .001), consistent with research documenting that institutions serving higher proportions of financially vulnerable students face structural barriers to degree completion (Perna, 2010). Average instructional staff salary was positively associated with high graduation rates (b = 3.00e-05, z = 7.74, p < .001), suggesting that faculty compensation, as a proxy for instructional quality and institutional investment, may be linked to student success outcomes. Because the predictors are measured in very different units (students, percentage points, dollars), the raw coefficients should not be compared directly for magnitude.

The full model showed substantial improvement over the null model (null deviance = 2277, df = 1706; residual deviance = 1632, df = 1701; AIC = 1644), indicating that the institutional features collectively accounted for meaningful variance in graduation performance.
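The raw coefficients are small because they describe a one-unit change (one student, one dollar, one percentage point). As a minimal sketch, the base R code below rescales the coefficients printed in the model summary into odds ratios for larger, more interpretable changes, and turns the reported deviances into a likelihood-ratio test and McFadden's pseudo R-squared. The unit sizes in unit_change are choices made here for illustration, and the sketch assumes the two percentage variables are stored on a 0 to 100 scale.

```r
# Coefficients copied from the printed glm() summary
coefs <- c(
  total_enroll    =  3.350e-05,
  pct_admitted    = -1.407e-02,
  tuition_fees    =  6.952e-05,
  percent_fin_aid = -2.960e-02,
  avg_salary      =  2.996e-05
)

# Illustrative unit sizes (assumptions): 1,000 students, 10 percentage points, $1,000
unit_change <- c(
  total_enroll    = 1000,
  pct_admitted    = 10,
  tuition_fees    = 1000,
  percent_fin_aid = 10,
  avg_salary      = 1000
)

# Odds ratio associated with each unit-sized change, holding other predictors fixed
odds_ratios <- exp(coefs * unit_change[names(coefs)])
round(odds_ratios, 3)

# Likelihood-ratio test of the full model against the null model
lr_stat <- 2277 - 1632          # null deviance minus residual deviance
lr_df   <- 1706 - 1701          # difference in degrees of freedom
p_value <- pchisq(lr_stat, df = lr_df, lower.tail = FALSE)

# McFadden's pseudo R-squared from the same deviances
mcfadden_r2 <- 1 - 1632 / 2277

c(lr_stat = lr_stat, lr_df = lr_df, p_value = p_value, mcfadden_r2 = mcfadden_r2)
```

Under these assumptions, a $1,000 increase in tuition and fees corresponds to an odds ratio of about 1.07, and the pseudo R-squared is about 0.28. These are descriptive associations, not causal effects.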

Supervised ML Workflow Results (RQ B)

The logistic regression model fitted within the tidymodels supervised machine learning workflow achieved an overall classification accuracy of 80.02%. Based on five institutional predictors, the model correctly classified roughly four out of five institutions as high- or low-performing on graduation rates, indicating that these features carry meaningful predictive signal with respect to graduation outcomes. Because accuracy was computed on the same data used to fit the model, this figure is likely an optimistic estimate of how the model would perform on institutions it has not seen.
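The accuracy reported here was computed on the full dataset, the same data used to fit the model. A common complement is to hold out part of the data and compare the result against a naive baseline that always predicts the majority class. The base R sketch below shows that pattern on simulated data. The data frame toy, its columns, and the generating coefficients are hypothetical stand-ins, not the IPEDS variables.

```r
# Simulated stand-in data (hypothetical columns and coefficients)
set.seed(123)
n <- 1000
toy <- data.frame(
  tuition = rnorm(n, mean = 20, sd = 8),       # in thousands of dollars
  pct_aid = runif(n, min = 40, max = 100)
)
toy$good_grad_rate <- rbinom(
  n, size = 1,
  prob = plogis(-1 + 0.15 * toy$tuition - 0.02 * toy$pct_aid)
)

# Hold out 25% of rows for testing
train_rows <- sample(n, size = round(0.75 * n))
train <- toy[train_rows, ]
test  <- toy[-train_rows, ]

# Fit on the training rows only
fit <- glm(good_grad_rate ~ tuition + pct_aid, data = train, family = binomial)

# Convert predicted probabilities to class labels with a 0.5 cutoff
classify <- function(model, data) {
  as.integer(predict(model, newdata = data, type = "response") > 0.5)
}

acc_train <- mean(classify(fit, train) == train$good_grad_rate)
acc_test  <- mean(classify(fit, test) == test$good_grad_rate)

# Baseline: always predict the majority class observed in the training rows
majority <- as.integer(mean(train$good_grad_rate) > 0.5)
acc_baseline <- mean(test$good_grad_rate == majority)

round(c(train = acc_train, test = acc_test, baseline = acc_baseline), 3)
```

With a median split on the outcome, the majority-class baseline sits near 50%, which gives a useful reference point for judging an accuracy of about 80%.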

Overall Discussion

- Similarities between Approaches:

- Both the traditional logistic regression and the tidymodels workflow identified key predictors that influence graduation rates, such as total enrollment, admission percentage, tuition fees, financial aid percentage, and average staff salary.

- Each approach provides valuable insights: the regression model offers detailed coefficient estimates and significance levels, while the tidymodels workflow emphasizes predictive accuracy.

- Differences between Approaches:

- Interpretability vs. Predictive Performance: The logistic regression output delivers interpretability through its coefficients and p-values, allowing us to understand the direction and magnitude of the relationships. In contrast, the supervised ML workflow focuses on achieving a robust predictive performance, evidenced by an 80% accuracy.

- Handling of Data: The traditional regression model summarizes the relationship between variables, whereas the ML workflow integrates data pre-processing, modeling, and validation into a cohesive framework.

In summary, our analyses indicate that institutional factors, particularly tuition fees and staff salaries, play a significant role in predicting graduation outcomes. The supervised ML approach, with an accuracy of around 80%, confirms the model’s practical utility in classifying institutions based on graduation performance. Both methods complement each other, providing a comprehensive understanding of the underlying dynamics that drive graduation rates in higher education.

6.4 Random Forests & Learning Analytics

In this section, we explore a more sophisticated supervised learning approach—Random Forests—to model student interaction data from the Open University Learning Analytics Dataset (OULAD).

6.4.1 Purpose and Case

Purpose

Random Forests is an ensemble learning method that builds multiple decision trees and aggregates their results to improve prediction accuracy and control overfitting. It is particularly well-suited for complex, high-dimensional data such as student interaction (clickstream) data.
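The "build many trees and aggregate" idea can be sketched without a random forest package. The code below bags classification trees by hand: each tree is fit to a bootstrap sample and the final prediction is a majority vote. It uses rpart (assumed to be available, as it ships with standard R installations) on simulated data with made-up columns. It is a simplification: a true random forest, as fit by ranger later in this section, also samples a random subset of predictors at each split.

```r
library(rpart)

# Simulated stand-in data (hypothetical predictors x1 and x2)
set.seed(1)
n <- 300
toy <- data.frame(x1 = rnorm(n), x2 = rnorm(n))
toy$y <- factor(ifelse(toy$x1 + toy$x2 + rnorm(n, sd = 0.5) > 0, "pass", "fail"))

# Fit one tree per bootstrap sample (sampling rows with replacement)
n_trees <- 25
trees <- lapply(seq_len(n_trees), function(i) {
  boot_rows <- sample(n, replace = TRUE)
  rpart(y ~ x1 + x2, data = toy[boot_rows, ], method = "class")
})

# Collect each tree's predicted class: one row per observation, one column per tree
votes <- sapply(trees, function(tree) {
  as.character(predict(tree, newdata = toy, type = "class"))
})

# Aggregate by majority vote across trees
bagged <- apply(votes, 1, function(v) names(which.max(table(v))))

# In-sample agreement with the true labels (optimistic; shown only to confirm the mechanics)
mean(bagged == toy$y)
```

Averaging over many trees reduces the variance of any single tree, which is the sense in which the ensemble helps control overfitting.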

Case Study: Predicting Student Success with VLE Data

Inspired by research on digital trace data, this case study uses interactions data from OULAD. We focus on predicting whether a student will pass a course based on engineered features derived from clickstream data, such as total clicks, mean clicks, and linear trends over time.
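To show what the engineered features capture, the base R sketch below computes total clicks, mean clicks, standard deviation, and a linear trend for three hypothetical students. The column names (id_student, date, sum_click) follow the OULAD conventions used later in this section, but the values are invented so that the three students have identical totals and differ only in trajectory.

```r
# Invented click logs: same total and mean clicks, different trajectories
toy_clicks <- data.frame(
  id_student = rep(c(101, 102, 103), each = 5),
  date       = rep(c(0, 5, 10, 15, 20), times = 3),
  sum_click  = c(2, 4, 6, 8, 10,     # rising engagement
                 10, 8, 6, 4, 2,     # falling engagement
                 6, 6, 6, 6, 6)      # flat engagement
)

# Summarise one student's log into a single row of features
summarise_student <- function(df) {
  trend <- lm(sum_click ~ date, data = df)   # linear trend of clicks over time
  data.frame(
    id_student  = df$id_student[1],
    sum_clicks  = sum(df$sum_click),
    mean_clicks = mean(df$sum_click),
    sd_clicks   = sd(df$sum_click),
    intercept   = unname(coef(trend)[1]),
    slope       = unname(coef(trend)[2])
  )
}

# Apply the summary to each student and stack the results
features <- do.call(
  rbind,
  lapply(split(toy_clicks, toy_clicks$id_student), summarise_student)
)
rownames(features) <- NULL
features
```

Because the totals and means are identical here, only the slope separates the rising student from the falling one, which is the information a trend feature adds beyond simple counts.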

6.4.2 Sample Research Questions

- RQ1: How accurately can a random forest model predict whether a student will pass a course using interaction data from OULAD?

- RQ2: Which interaction-based features (e.g., total clicks, clickstream slope) are most important in predicting student outcomes?

- RQ3: How does the use of cross-validation influence the stability and generalizability of the model?

6.4.3 Analysis

Step 1: Load Required Packages

# Load necessary libraries for data manipulation and modeling

library(tidyverse) # Data wrangling and visualization

library(janitor) # Cleaning variable names

library(tidymodels) # Modeling workflow

library(ranger) # Random forest implementation

library(vip) # Variable importance plots

Step 2: Load and Prepare Data

We load and join multiple OULAD files to create a complete modeling dataset.

# Load the interactions data and student performance data

interactions <- read_csv("data/oulad-interactions-filtered.csv")

students_and_assessments <- read_csv("data/oulad-students-and-assessments.csv")

assessments <- read_csv("data/oulad-assessments.csv")

# Create cutoff dates based on first 25% of assessment submissions

code_module_dates <- assessments |>

group_by(code_module, code_presentation) |>

summarize(quantile_cutoff_date = quantile(date_submitted, probs = 0.25, na.rm = TRUE), .groups = 'drop')

# Filter interactions to include only those before the cutoff

interactions_filtered <- interactions |>

left_join(code_module_dates, by = c("code_module", "code_presentation"), suffix = c(".x", "")) |>

filter(date < quantile_cutoff_date) |>

select(-any_of("quantile_cutoff_date.x"))

# Feature Engineering: Summary statistics of clicks

interactions_summarized <- interactions_filtered |>

group_by(id_student, code_module, code_presentation) |>

summarize(

sum_clicks = sum(sum_click, na.rm = TRUE),

sd_clicks = sd(sum_click, na.rm = TRUE),

mean_clicks = mean(sum_click, na.rm = TRUE),

.groups = "drop"

)

# Feature Engineering: Clickstream slopes (trends)

fit_slope <- function(df) {

if(nrow(df) < 2) return(tibble(term = c("(Intercept)", "date"), estimate = c(NA, NA)))

model <- lm(sum_click ~ date, data = df)

broom::tidy(model) |> select(term, estimate)

}

interactions_slopes <- interactions_filtered |>

group_by(id_student, code_module, code_presentation) |>

nest() |>

mutate(model = map(data, fit_slope)) |>

select(-data) |>

unnest(model) |>

pivot_wider(names_from = term, values_from = estimate) |>

rename(intercept = `(Intercept)`, slope = date) |>

mutate(across(where(is.numeric), \(x) round(x, 4)))

# Join all features

students_full <- students_and_assessments |>

left_join(interactions_summarized, by = c("id_student", "code_module", "code_presentation")) |>

left_join(interactions_slopes, by = c("id_student", "code_module", "code_presentation")) |>

mutate(pass = as.factor(pass))

Step 3: Define Model Recipe

my_rec2 <- recipe(pass ~ disability + date_registration + gender + code_module +

mean_weighted_score + sum_clicks + sd_clicks + mean_clicks +

intercept + slope,

data = students_full) |>

step_dummy(all_nominal_predictors()) |>

step_impute_knn(all_predictors()) |>

step_normalize(all_numeric_predictors())

Step 4: Specify Model and Workflow

# Specify random forest model with impurity-based importance

my_mod2 <- rand_forest() |>

set_engine("ranger", importance = "impurity") |>

set_mode("classification")

# Create workflow

my_wf2 <- workflow() |>

add_recipe(my_rec2) |>

add_model(my_mod2)

Step 5: Resampling and Model Fitting

set.seed(20230712)

vfcv <- vfold_cv(data = students_full, v = 4, strata = pass)

class_metrics <- metric_set(accuracy, sensitivity, specificity, ppv, npv, kap)

# Fit using cross-validation

fitted_resamples <- fit_resamples(my_wf2, resamples = vfcv, metrics = class_metrics)

collect_metrics(fitted_resamples)

# A tibble: 6 × 6

.metric .estimator mean n std_err .config

<chr> <chr> <dbl> <int> <dbl> <chr>

1 accuracy binary 0.672 4 0.00153 Preprocessor1_Model1

2 kap binary 0.265 4 0.00475 Preprocessor1_Model1

3 npv binary 0.592 4 0.00171 Preprocessor1_Model1

4 ppv binary 0.703 4 0.00205 Preprocessor1_Model1

5 sensitivity binary 0.817 4 0.00188 Preprocessor1_Model1

6 specificity binary 0.434 4 0.00667 Preprocessor1_Model1

Step 6: Final Evaluation

set.seed(20230712)

train_test_split <- initial_split(students_full, prop = 0.67, strata = pass)

# Final fit on training, evaluate on test

final_fit <- last_fit(my_wf2, train_test_split, metrics = class_metrics)

collect_metrics(final_fit)

# A tibble: 6 × 4

.metric .estimator .estimate .config

<chr> <chr> <dbl> <chr>

1 accuracy binary 0.665 Preprocessor1_Model1

2 sensitivity binary 0.820 Preprocessor1_Model1

3 specificity binary 0.411 Preprocessor1_Model1

4 ppv binary 0.695 Preprocessor1_Model1

5 npv binary 0.582 Preprocessor1_Model1

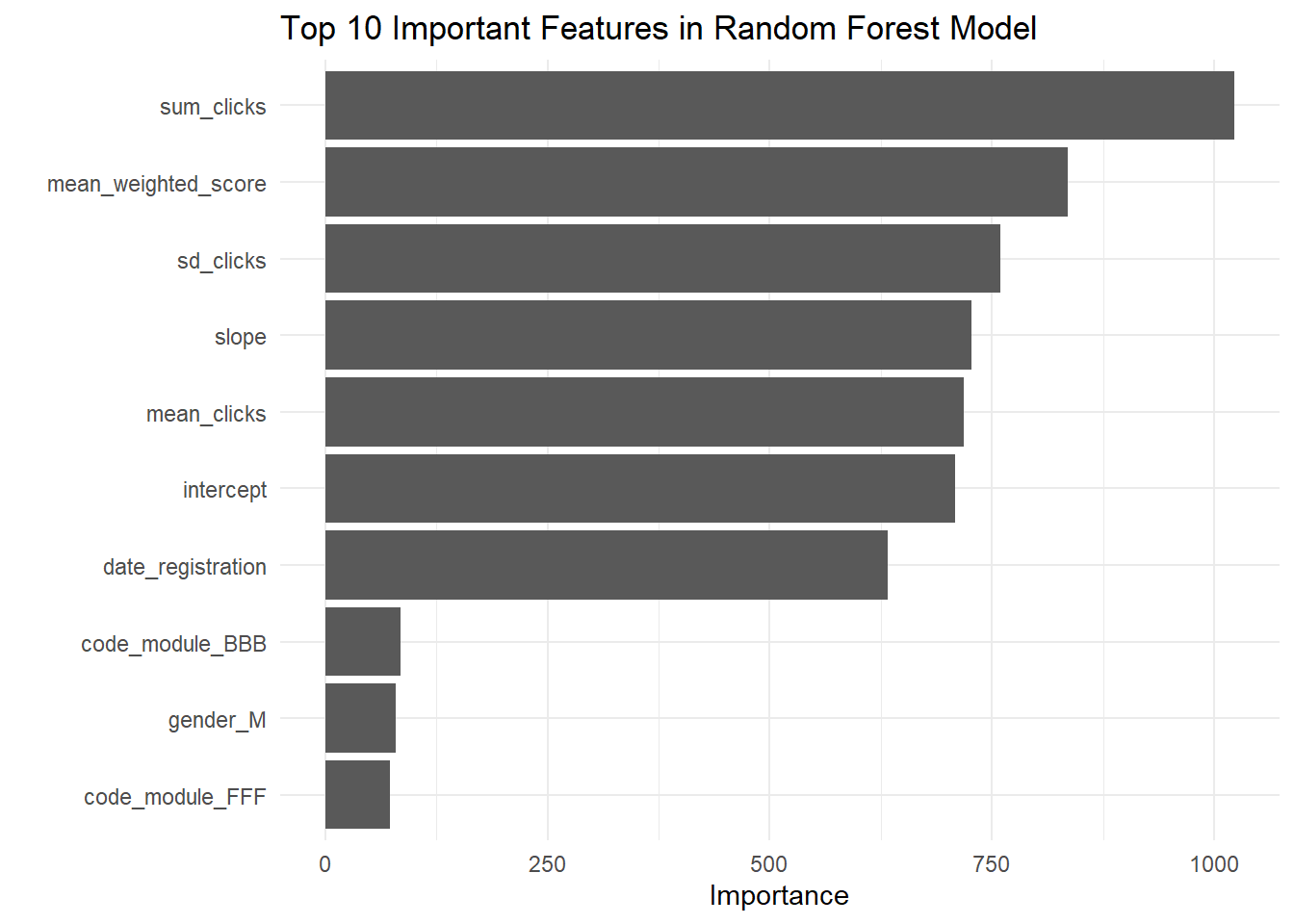

6 kap binary 0.245 Preprocessor1_Model1# Variable Importance Plot

final_fit |>

extract_workflow() |>

extract_fit_parsnip() |>

vip(num_features = 10) +

theme_minimal() +

labs(title = "Top 10 Important Features in Random Forest Model")

A sample methods section based on these steps can be written as follows:

Methods

Data Source

Data were drawn from the Open University Learning Analytics Dataset (OULAD; Kuzilek et al., 2017), a publicly available dataset containing demographic information, virtual learning environment (VLE) interaction logs, and assessment records for students enrolled in Open University courses across multiple module presentations. OULAD has been widely used in learning analytics research to model student engagement and predict academic outcomes (e.g., Hlosta et al., 2017; Mubarak et al., 2020).

Outcome Variable

The outcome variable was a binary indicator of course result, coded as pass (1) or non-pass (0), derived from the student registration and outcome data provided in OULAD.

Feature Engineering

To simulate an early-warning scenario, VLE interaction data were restricted to the period prior to the 25th percentile of assessment submission dates within each module-presentation combination. From this temporally constrained interaction window, three summary features were computed for each student: total clicks (sum_clicks), mean clicks per logged interaction record (mean_clicks), and standard deviation of clicks (sd_clicks). In addition, a linear regression was fitted to each student’s click sequence over time, yielding an intercept and slope that capture the baseline level and temporal trend of engagement, respectively. These engineered features were joined with student demographic variables (disability status, gender, registration date, and course module) and a measure of prior academic performance (mean weighted assessment score).

Preprocessing

All nominal predictors were dummy-coded. Missing values were imputed using k-nearest neighbors (KNN) imputation. All numeric predictors were normalized to a mean of zero and standard deviation of one. Preprocessing was implemented via the recipes package within the tidymodels framework (Kuhn & Wickham, 2020).

Model Specification and Evaluation

A random forest classifier was fit using the ranger engine with impurity-based variable importance (Wright & Ziegler, 2017). Model performance was assessed using two complementary evaluation strategies. First, 4-fold cross-validation stratified by the outcome variable was used to estimate out-of-sample performance across multiple data partitions. Second, a held-out test set (33% of the sample, stratified by outcome) was used for final model evaluation. Performance was quantified using accuracy, sensitivity, specificity, positive predictive value (PPV), negative predictive value (NPV), and Cohen’s Kappa. Variable importance was extracted from the final fitted model and visualized for the ten most influential predictors.
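The mechanics of stratified k-fold cross-validation can be sketched in base R. The example below assigns fold labels separately within each outcome class, so every fold keeps roughly the same pass rate, then fits and scores a model once per fold. To stay self-contained it uses simulated data with hypothetical columns and a logistic regression in place of the random forest. In the chapter's own workflow, vfold_cv() and fit_resamples() handle these steps.

```r
# Simulated stand-in data (hypothetical predictor `clicks`)
set.seed(20230712)
n <- 400
toy <- data.frame(clicks = rnorm(n))
toy$pass <- rbinom(n, size = 1, prob = plogis(-0.5 + 1.2 * toy$clicks))

# Stratified fold assignment: shuffle fold labels within each outcome class
v <- 4
toy$fold <- NA_integer_
for (cls in unique(toy$pass)) {
  rows <- which(toy$pass == cls)
  toy$fold[rows] <- sample(rep(seq_len(v), length.out = length(rows)))
}

# For each fold: train on the other folds, evaluate on the held-out fold
fold_accuracy <- sapply(seq_len(v), function(k) {
  train <- toy[toy$fold != k, ]
  test  <- toy[toy$fold == k, ]
  fit   <- glm(pass ~ clicks, data = train, family = binomial)
  pred  <- as.integer(predict(fit, newdata = test, type = "response") > 0.5)
  mean(pred == test$pass)
})

# Mean accuracy across folds and its standard error
c(mean = mean(fold_accuracy), std_err = sd(fold_accuracy) / sqrt(v))
```

The mean and standard error computed here correspond to the mean and std_err columns that collect_metrics() reports for resampled fits.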

6.4.4 Results and Discussion

Research Question 1 (RQ1):

How accurately can a random forest model predict whether a student will pass a course using interactions data from OULAD?

Response:

Using 4-fold cross-validation, our random forest model yielded an average accuracy of approximately 67% with a Cohen’s Kappa of about 0.26, which indicates only fair agreement beyond chance. When fitted on the entire training set and evaluated on the test set, the final model showed an accuracy of 66.5% along with:

- Sensitivity: 81.5% – indicating the model correctly identifies a high proportion of students who pass.

- Specificity: 41.9% – suggesting that the model is less effective at correctly identifying students who do not pass.

- Positive Predictive Value (PPV): 69.7%

- Negative Predictive Value (NPV): 58.0%

The confusion matrix shows:

- True Negatives (TN): 5439

- False Negatives (FN): 1238

- False Positives (FP): 2370

- True Positives (TP): 1710

Overall, these metrics indicate that while the model performs well in detecting positive outcomes (high sensitivity), its lower specificity means that it tends to misclassify a relatively higher proportion of non-passing students. In plain English, our model is an optimist: it is great at spotting the winners, but it sometimes overlooks the stragglers. This is a crucial insight for educators: the tool is better used as an early warning system for success than as a perfect detector of failure. One caveat applies to this reading. By default, yardstick treats the first level of the outcome factor as the event of interest, and pass is stored as a factor with levels 0 and 1, so unless event_level = "second" is specified the reported sensitivity describes class 0 (non-pass) rather than class 1 (pass). Which class each metric refers to should therefore be verified against the confusion matrix before the results are used to guide interventions.
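The four confusion-matrix counts can be checked against the reported percentages with simple arithmetic. The base R sketch below keeps the labels exactly as given in the text and shows which ratios of the counts reproduce each reported metric. It makes no claim about how the counts were originally laid out. It only makes visible that the reported sensitivity equals 5439 / (5439 + 1238), which is not the textbook TP / (TP + FN) under the labels as printed. One possible explanation, offered here as an assumption to verify, is that the metrics were computed with the first factor level (0) as the event, which is the yardstick default.

```r
# Counts as reported in the text, with the labels used there
tn <- 5439
fn <- 1238
fp <- 2370
tp <- 1710
total <- tn + fn + fp + tp

# Overall accuracy does not depend on which class is called "positive"
accuracy <- (tn + tp) / total

# Ratios of the reported counts that reproduce the reported percentages
ratio_sens <- tn / (tn + fn)   # reported as sensitivity (81.5%)
ratio_spec <- tp / (tp + fp)   # reported as specificity (41.9%)
ratio_ppv  <- tn / (tn + fp)   # reported as PPV (69.7%)
ratio_npv  <- tp / (tp + fn)   # reported as NPV (58.0%)

# Textbook definitions applied to the labels exactly as printed
textbook_sens <- tp / (tp + fn)
textbook_spec <- tn / (tn + fp)

round(c(
  accuracy = accuracy,
  ratio_sens = ratio_sens, ratio_spec = ratio_spec,
  ratio_ppv = ratio_ppv, ratio_npv = ratio_npv,
  textbook_sens = textbook_sens, textbook_spec = textbook_spec
), 3)
```

If the event level is the source of the mismatch, passing event_level = "second" to the yardstick metric functions would make sensitivity refer to the pass class. Printing the confusion matrix with its row and column labels is the most direct way to confirm.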

Research Question 2 (RQ2):

Which interaction-based features are most important in predicting student outcomes?

Response:

The variable importance analysis, extracted from the final random forest model using the vip() function, highlights the following key predictors (with their respective importance scores):

- sum_clicks: 1062.49 – This is the most influential feature, indicating that the total number of clicks (i.e., student engagement) in the VLE is a strong predictor of student success. In other words, “showing up” digitally matters just as much as showing up in person.

- mean_weighted_score: 838.06 – Reflecting academic performance as measured by weighted assessment scores. Past performance is usually a strong predictor of future success, and this confirms it.

- mean_clicks: 737.79, slope: 739.86, and intercept: 729.52 – These engineered features representing the central tendency and trend of click behavior further underline the importance of digital engagement patterns.

- date_registration: 652.15 – The registration date also plays a significant role.

- Other categorical variables (e.g., dummy-coded disability, gender, and code_module levels) generally show lower importance scores, with values typically under 80, indicating that while they do contribute, engagement and performance metrics dominate.

These results suggest that both the intensity and the temporal trend of student interactions with the learning environment are critical in predicting whether a student will pass. The slope (trend) is a particularly interesting finding: it suggests that what matters is not just how much a student clicks, but how their behavior changes over time. Importance scores do not indicate the direction of an effect, however, so the intuition that a student whose engagement is increasing is better placed than one whose activity is tapering off should be confirmed with a follow-up analysis of how predicted outcomes vary with slope.
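Impurity-based importance, as used in this chapter, is known to favor continuous predictors over binary dummy variables, so it can be worth cross-checking rankings with a different measure. The sketch below illustrates permutation importance, a swapped-in technique rather than the method used above: shuffle one predictor at a time and record how much accuracy drops. It uses simulated data with hypothetical columns and a logistic regression to stay self-contained, and it scores on the training data for brevity, which a real analysis would replace with held-out data.

```r
# Simulated stand-in data: two informative predictors and one pure-noise column
set.seed(99)
n <- 2000
toy <- data.frame(
  sum_clicks = rnorm(n),
  slope      = rnorm(n),
  noise      = rnorm(n)
)
toy$pass <- rbinom(n, size = 1, prob = plogis(1.5 * toy$sum_clicks + 0.5 * toy$slope))

fit <- glm(pass ~ sum_clicks + slope + noise, data = toy, family = binomial)

# Accuracy of the fitted model on a given data frame
accuracy <- function(data) {
  pred <- as.integer(predict(fit, newdata = data, type = "response") > 0.5)
  mean(pred == data$pass)
}
baseline_acc <- accuracy(toy)

# Permutation importance: mean drop in accuracy after shuffling one column
perm_importance <- sapply(c("sum_clicks", "slope", "noise"), function(var) {
  drops <- replicate(20, {
    shuffled <- toy
    shuffled[[var]] <- sample(shuffled[[var]])
    baseline_acc - accuracy(shuffled)
  })
  mean(drops)
})

sort(round(perm_importance, 4), decreasing = TRUE)
```

A predictor whose shuffling barely changes accuracy contributes little to the predictions, whatever its impurity-based score.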

Research Question 3 (RQ3):

How does the use of cross-validation impact the stability and generalizability of the random forest model on interactions data?

Response:

The use of 4-fold cross-validation (via vfold_cv) allowed us to assess the model’s performance across multiple subsets of the data, which helps reveal overfitting that a single in-sample estimate would hide. The resampling results are consistent across folds (accuracy around 67%, sensitivity near 82%, and specificity near 43%, each with a small standard error), which supports the stability of the model. The final test set performance (accuracy of 66.5%) is close to the cross-validated estimate, indicating that the model behaves similarly on data it has not seen. This consistency suggests that the patterns are not an artifact of one particular split, although it does not by itself establish that they hold for student populations outside this dataset.

Overall Discussion

The random forest model built on interactions data from OULAD demonstrates decent predictive performance with an accuracy of approximately 66.5–67% and high sensitivity (around 81.5%), indicating strong capability in identifying students who will pass the course. However, the relatively low specificity (around 42%) suggests that there is room for improvement in correctly classifying students who are at risk of not passing.

The variable importance analysis underscores that engagement-related features—especially sum_clicks and features capturing the trend in interactions (slope, mean_clicks)—are the most influential predictors. This insight implies that the digital footprint of student engagement in the virtual learning environment is critical for predicting academic outcomes. Every click tells a story; ignoring them means missing valuable signals.

In summary, while our model performs robustly across cross-validation folds and provides actionable insights into key predictive features, the lower specificity points to the need for further refinement. Future work might explore additional feature engineering, alternative model tuning, or combining models to better balance sensitivity and specificity, ultimately supporting timely interventions in educational settings.
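One simple lever for balancing sensitivity and specificity is the probability cutoff used to turn predicted probabilities into class labels. The base R sketch below shows the trade-off on simulated data, with pass = 1 defined explicitly as the positive class. The data, column names, and cutoffs are hypothetical, and a logistic regression stands in for the random forest. With tidymodels, the same idea applies to the class probabilities returned by predict(..., type = "prob").

```r
# Simulated stand-in data (hypothetical predictor `engagement`)
set.seed(2024)
n <- 1000
toy <- data.frame(engagement = rnorm(n))
toy$pass <- rbinom(n, size = 1, prob = plogis(0.8 + 1.0 * toy$engagement))

fit <- glm(pass ~ engagement, data = toy, family = binomial)
p_pass <- predict(fit, type = "response")   # predicted probability of passing

# Sensitivity and specificity at a given cutoff, with pass = 1 as the positive class
metrics_at <- function(threshold) {
  pred <- as.integer(p_pass >= threshold)
  c(
    threshold   = threshold,
    sensitivity = mean(pred[toy$pass == 1] == 1),   # passers correctly flagged as pass
    specificity = mean(pred[toy$pass == 0] == 0)    # non-passers correctly flagged as non-pass
  )
}

# Compare three illustrative cutoffs
tradeoff <- t(sapply(c(0.3, 0.5, 0.7), metrics_at))
round(tradeoff, 3)
```

Raising the cutoff can only lower sensitivity and raise specificity, so the choice depends on which error is more costly: missing a student who needs support, or flagging one who does not.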

6.5 Summary

This chapter introduced ways to access large-scale numeric data (PISA, IPEDS, and OULAD) and applied supervised machine learning to two case studies. In the first, logistic regression on IPEDS data provided an interpretable baseline for classifying institutions by graduation rate. In the second, a random forest fit to OULAD interaction data captured more complex patterns at the cost of interpretability, and its variable importance scores pointed to click activity and its trajectory over time as influential predictors of course outcomes. These methods extend the analytical toolkit introduced in earlier chapters by moving from descriptive and relational analyses toward predictive modeling.