# Install and load the igraph package

# install.packages("igraph")

library(igraph)

# Example: Load an edge list from CSV

edge_list <- read.csv("data/friendship_edges.csv")

# Create the graph object (directed network)

g <- graph_from_data_frame(edge_list, directed = TRUE)

# Plot the network

plot(g, main = "Friendship Network")

Network Data

5.1 Overview

Social network analysis (SNA) is the process of investigating social structures through the use of networks and graph theory (Borgatti et al., 2013; Wasserman & Faust, 1994). It maps and measures relationships and flows between people, groups, organizations, computers, or other information/knowledge processing entities — capturing not just individual attributes but the connections between them. In educational research, SNA has been applied to understand collaboration structures, examine the spread of information and influence, and evaluate the effects of instructional interventions on learner interactions (Carolan, 2014).

These applications range from face-to-face classroom dynamics to large-scale online learning environments. For example, Kellogg and Edelmann (2015) examined discussion networks in a massive open online course (MOOC), and Rosenberg and Staudt Willet (2021) explored how social influence models can be advanced within learning analytics contexts. Used this way, SNA can identify patterns in how networks form and operate, help predict future behavior, and inform the design of interventions that improve learning environments.

Portions of this chapter adapt instructional and research resources developed through the NSF-supported Learning Analytics in STEM Education Research (LASER) Institute. See the Preface for the full acknowledgment and disclaimer.

5.2 Accessing SNA Data

SNA relies on relational data: information about connections (edges) between entities (nodes) such as students, teachers, or organizations. Unlike traditional survey or tabular data, which describe each case separately, SNA requires information about pairs of actors. In education, this could include “who collaborates with whom,” “who talks to whom,” or digital traces of discussion and collaboration in online platforms.

5.2.1 Types and Sources of SNA Data

There are several common sources and structures for SNA data in educational research contexts:

- Survey-based Network Data: Collected via roster or name generator questions, e.g., “List the classmates you discuss assignments with.”

- Behavioral/Observational Data: Derived from logs of actual interactions, e.g., forum replies, emails, classroom seating.

- Archival or Digital Trace Data: Extracted from digital platforms such as MOOCs, LMS discussion forums, Slack, Twitter, or Facebook.

- Administrative/Organizational Data: Information about formal structures such as team membership or co-authorship.

Data Structure: Most SNA data are formatted as:

- Edge List (two columns: source and target)

- Adjacency Matrix (rows and columns are actors; cell values indicate a tie)

- Node Attributes (supplementary information about each node, e.g., gender, role)
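To make the three structures concrete, here is a minimal sketch in base R. The actor names, the `role` column, and the object names (`edges`, `nodes`, `adj`) are hypothetical and chosen only for illustration.

```r
# Hypothetical edge list: each row is one directed tie (source -> target)
edges <- data.frame(
  source = c("Ana", "Ana", "Ben", "Cam"),
  target = c("Ben", "Cam", "Cam", "Ana"),
  stringsAsFactors = FALSE
)

# Hypothetical node attributes: one row per actor, including an actor with no ties
nodes <- data.frame(
  name = c("Ana", "Ben", "Cam", "Dee"),
  role = c("student", "student", "student", "teacher"),
  stringsAsFactors = FALSE
)

# Adjacency matrix: rows are senders, columns are receivers, 1 marks a tie
adj <- matrix(0, nrow = nrow(nodes), ncol = nrow(nodes),
              dimnames = list(nodes$name, nodes$name))

# Translate actor names into row/column positions, then fill in the ties
idx <- cbind(match(edges$source, nodes$name),
             match(edges$target, nodes$name))
adj[idx] <- 1

print(adj)
```

The edge list and the adjacency matrix carry the same ties. The matrix also makes actors without ties visible (the empty row and column for the hypothetical actor `Dee`), which an edge list alone cannot show.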

5.2.2 Example 1: Creating a Simple Network from an Edge List

Below is an example of constructing a network from a simple CSV edge list. This mirrors typical classroom survey data (“who do you consider your friend in this class?”).
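For readers without the CSV file at hand, the same idea can be tried on a small in-memory edge list. This is a minimal sketch: the student names and the object names `friend_edges` and `g_friend` are hypothetical, and only standard igraph functions are used.

```r
library(igraph)

# Hypothetical responses to "Who do you consider your friend in this class?"
friend_edges <- data.frame(
  from = c("Ana", "Ana", "Ben", "Cam", "Dee"),
  to   = c("Ben", "Cam", "Ana", "Ana", "Cam"),
  stringsAsFactors = FALSE
)

# Each row becomes one directed tie from the respondent to the named friend
g_friend <- graph_from_data_frame(friend_edges, directed = TRUE)

vcount(g_friend)       # number of students in the network
ecount(g_friend)       # number of friendship nominations
is_directed(g_friend)  # nominations need not be reciprocated

# Nominations received by each student (in-degree)
degree(g_friend, mode = "in")
```

Treating the network as directed keeps unreciprocated nominations distinct from mutual ones, which is usually what a friendship survey is meant to capture.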

5.2.3 Example 2: Generating Network Data from Digital Traces

Many educational datasets now come from online discussion forums, MOOCs, or learning management systems (LMS). For example, the MOOC case study (Kellogg & Edelmann, 2015) uses reply relationships in online courses to construct discussion networks.

# Suppose you have a data frame with columns: from_user, to_user

mooc_edges <- read.csv("data/mooc_discussion_edges.csv")

g_mooc <- graph_from_data_frame(mooc_edges, directed = TRUE)

plot(g_mooc, main = "MOOC Discussion Network")

5.2.4 Example 3: Collecting SNA Data via Surveys

If you want to collect your own network data:

- Ask participants to name or select (from a roster) their friends, collaborators, or contacts.

- Compile responses into an edge list.

Example survey prompt:

“Please list up to five classmates you seek help from most frequently.”

Survey-based SNA is easier to manage with small to medium groups. For larger networks, digital trace or archival data may be more practical.
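Survey exports typically have one row per respondent and one column per nomination, so compiling an edge list means reshaping from wide to long. The sketch below assumes a hypothetical export with columns `respondent`, `help_1`, and `help_2`; real survey tools will use different column names.

```r
library(tidyr)

# Hypothetical survey export: one row per respondent, one column per nomination
survey <- data.frame(
  respondent = c("Ana", "Ben", "Cam"),
  help_1     = c("Ben", "Ana", "Ana"),
  help_2     = c("Cam", NA,    "Ben"),
  stringsAsFactors = FALSE
)

# Reshape to one row per nomination; unused nomination slots (NA) are dropped
help_long <- pivot_longer(
  survey,
  cols = c(help_1, help_2),
  names_to = "nomination",
  values_to = "receiver",
  values_drop_na = TRUE
)

# Keep only the two columns an edge list needs, sender first
help_edges <- data.frame(
  sender   = help_long$respondent,
  receiver = help_long$receiver,
  stringsAsFactors = FALSE
)

print(help_edges)
```

The resulting two-column data frame can be passed to `graph_from_data_frame()` in the same way as the CSV edge lists in the earlier examples.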

5.2.5 Node Attribute Data

You can also load additional data about each node (student, teacher, etc.) to enable richer analyses (e.g., centrality by gender or role).

node_attributes <- read.csv("data/friendship_nodes.csv")

# Add attributes to igraph object

V(g)$gender <- node_attributes$gender[match(V(g)$name, node_attributes$name)]

5.2.6 Further Examples

- Public Datasets:

- Synthetic Data:

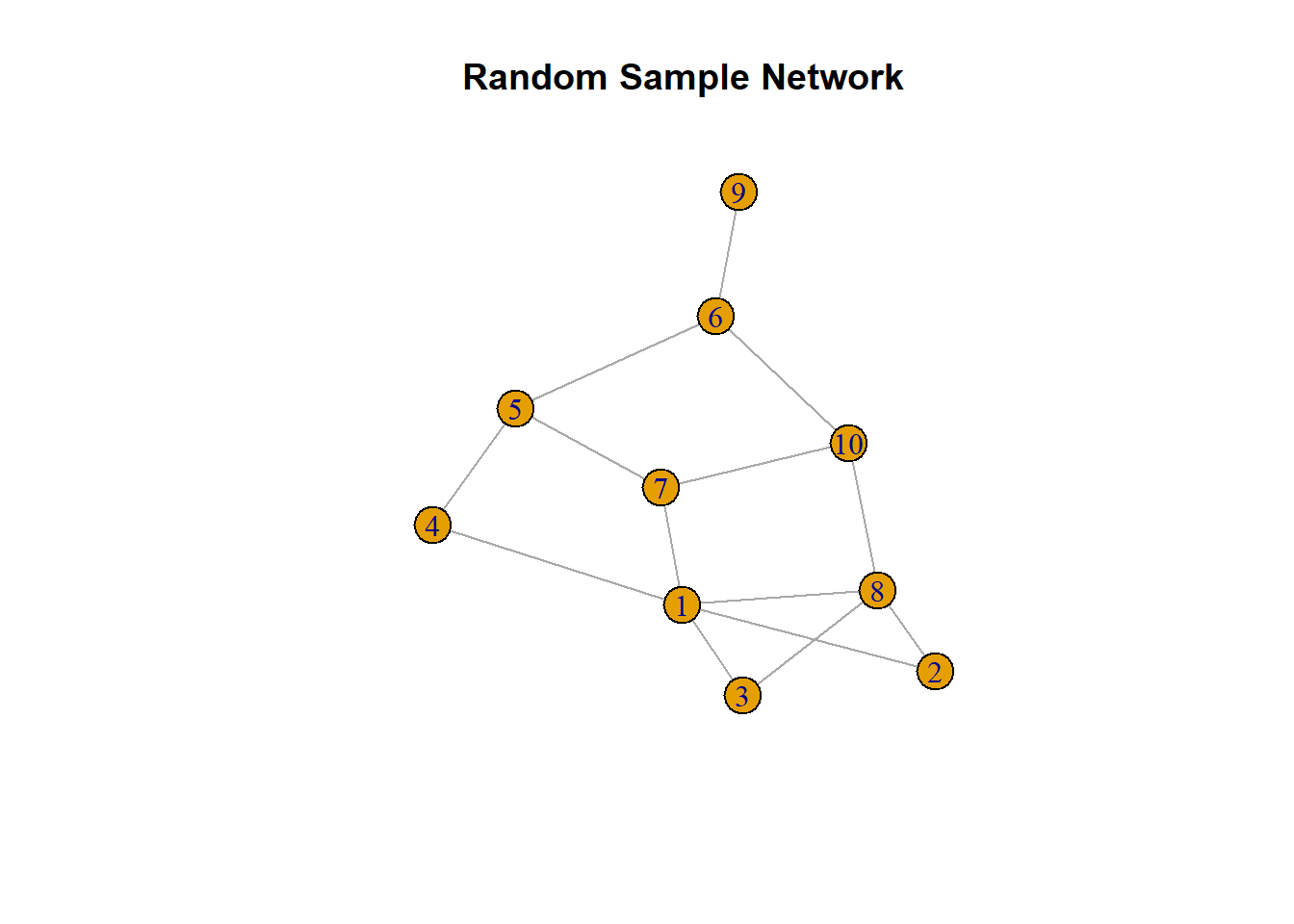

R’s

igraphpackage can also generate sample networks for practice:library(igraph) g_sample <- sample_gnp(n = 10, p = 0.3) plot(g_sample, main = "Random Sample Network")

5.2.7 Best Practices and Tips

- Ethics: Social network data can be sensitive. Protect anonymity and comply with IRB/data use guidelines.

- Format Consistency: Record whether ties are directed or undirected and binary or weighted, and format the data consistently.

- Missing Data: Especially in survey-based SNA, missing responses can impact network structure and interpretation.
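Some of these checks can be scripted before any graph is built. The following is a rough sketch in base R, assuming a hypothetical edge list with `from` and `to` columns and a hypothetical roster with a `name` column; it flags common formatting problems but does not replace a careful review of the data.

```r
# Hypothetical edge list and roster; "Eli" is deliberately missing from the roster
edges <- data.frame(
  from = c("Ana", "Ben", "Cam", "Ana"),
  to   = c("Ben", "Cam", "Eli", "Ben"),
  stringsAsFactors = FALSE
)
nodes <- data.frame(
  name = c("Ana", "Ben", "Cam", "Dee"),
  stringsAsFactors = FALSE
)

# Actors named in a tie but absent from the roster (possible ID typos)
unknown_actors <- setdiff(unique(c(edges$from, edges$to)), nodes$name)

# Roster members with no ties at all (true isolates or possible nonrespondents)
unconnected <- setdiff(nodes$name, c(edges$from, edges$to))

# Repeated ties, which matter when choosing between binary and weighted edges
n_duplicate_ties <- sum(duplicated(edges[, c("from", "to")]))

unknown_actors
unconnected
n_duplicate_ties
```

Whether an unconnected roster member is a genuine isolate or a missing response cannot be decided from the data alone, so flagged cases should be checked against the collection records.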

5.2.8 Summary

Accessing SNA data involves both careful design (in the case of surveys/observations) and extraction/wrangling (in the case of digital traces or archival records). The choice of data source and structure will directly influence the kinds of questions you can answer with SNA.

- Borgatti, S. P., Everett, M. G., & Johnson, J. C. (2018). Analyzing Social Networks (2nd ed). SAGE.

- Kellogg, S., & Edelmann, A. (2015). Massive open online course discussion forums as networks.

5.4 Case Study: Hashtag Common Core

5.4.1 Purpose and Case

The purpose of this case study is to demonstrate the application of social network analysis (SNA) in a real-world policy context: the heated national debate over the Common Core State Standards (CCSS) as it played out on Twitter. Drawing on the work of Supovitz, Daly, del Fresno, and Kolouch, the #COMMONCORE Project provides a vivid example of how social media-enabled networks shape educational discourse and policy.

This case focuses on:

- Identifying key actors (“transmitters,” “transceivers,” and “transcenders”) and measuring their influence.

- Detecting subgroups/factions within the conversation.

- Exploring how sentiment about the Common Core varies across network positions.

- Demonstrating network wrangling, visualization, and analysis using real tweet data.

Data Source

Data were collected from Twitter’s public API using keywords and hashtags related to the Common Core (e.g., #commoncore, ccss, stopcommoncore). The dataset includes user names, tweets, mentions, retweets, and timestamps from a sample week. Only public tweets are included, and user privacy is respected.

5.4.2 Sample Research Questions

- RQ1: Who are the “transmitters,” “transceivers,” and “transcenders” in the Common Core Twitter network?

- RQ2: What subgroups or factions exist within the network, and how are they structured?

- RQ3: How does sentiment about the Common Core vary across actors and subgroups?

- RQ4: What other patterns of communication (e.g., centrality, clique formation, isolates) characterize this network?

5.4.3 Analysis

Step 1: Load Required Packages

library(tidyverse)

library(tidygraph)

library(ggraph)

library(skimr)

library(igraph)

library(tidytext)

library(vader)

Step 2: Data Import and Wrangling

# Import tweet data (edgelist format: sender, receiver, timestamp, text)

ccss_tweets <- read_csv("data/ccss-tweets.csv")

# Prepare the edgelist (extract sender, mentioned users, and tweet text)

ties_1 <- ccss_tweets |>
  relocate(sender = screen_name, target = mentions_screen_name) |>
  select(sender, target, created_at, text)

# Unnest receiver to handle multiple mentions per tweet

ties_2 <- ties_1 |>
  unnest_tokens(input = target,
                output = receiver,
                to_lower = FALSE) |>
  relocate(sender, receiver)

# Remove tweets without mentions to focus on direct connections

ties <- ties_2 |>
  drop_na(receiver)

# Build nodelist

actors <- ties |>
  select(sender, receiver) |>
  pivot_longer(cols = c(sender, receiver), names_to = "role", values_to = "actors") |>
  select(actors) |>
  distinct()

# Create Network Object

ccss_network <- tbl_graph(edges = ties,
                          nodes = actors,
                          directed = TRUE)

ccss_network

# A tbl_graph: 46 nodes and 42 edges
#
# A directed multigraph with 14 components
#
# Node Data: 46 × 1 (active)
   actors
   <chr>
 1 DistanceLrnBot
 2 k12movieguides
 3 WEquilSchool
 4 JoeWEquil
 5 SumayLu
 6 fluttbot
 7 BodShameless
 8 Math
 9 ozsultan
10 sfchronicle
# ℹ 36 more rows
#
# Edge Data: 42 × 4
   from    to created_at          text
  <int> <int> <dttm>              <chr>
1     1     2 2021-06-28 09:53:54 "#Luca Movie Guide | Worksheet | Questions | …
2     3     4 2021-06-28 02:32:59 "Why public schools should focus more on buil…
3     3     3 2021-06-28 02:32:59 "Why public schools should focus more on buil…
# ℹ 39 more rows

Step 4: Network Structure – Components, Cliques, and Communities

- Components: Identify weak and strong components (connected subgroups):

ccss_network <- ccss_network |>
  activate(nodes) |>
  mutate(weak_component = group_components(type = "weak"),
         strong_component = group_components(type = "strong"))

# View component sizes

ccss_network |>
  as_tibble() |>
  group_by(weak_component) |>
  summarise(size = n()) |>
  arrange(desc(size))

# A tibble: 14 × 2
   weak_component  size
            <int> <int>
 1              1    14
 2              2     6
 3              3     4
 4              4     3
 5              5     3
 6              6     2
 7              7     2
 8              8     2
 9              9     2
10             10     2
11             11     2
12             12     2
13             13     1
14             14     1

- Cliques: Identify fully connected subgroups (if any):

clique_num(ccss_network)

[1] 4

cliques(ccss_network, min = 3)

[[1]]
+ 3/46 vertices, from 2eb16ca:
[1] 4 5 6

[[2]]
+ 3/46 vertices, from 2eb16ca:
[1] 39 40 41

[[3]]
+ 3/46 vertices, from 2eb16ca:
[1] 3 4 6

[[4]]
+ 4/46 vertices, from 2eb16ca:
[1] 3 4 5 6

[[5]]
+ 3/46 vertices, from 2eb16ca:
[1] 3 4 5

[[6]]
+ 3/46 vertices, from 2eb16ca:
[1] 3 5 6

- Communities: Detect densely connected communities using edge betweenness:

ccss_network <- ccss_network |>

morph(to_undirected) |>

activate(nodes) |>

mutate(sub_group = group_edge_betweenness()) |>

unmorph()

ccss_network |>

as_tibble() |>

group_by(sub_group) |>

summarise(size = n()) |>

arrange(desc(size))# A tibble: 16 × 2

sub_group size

<int> <int>

1 1 10

2 2 6

3 3 4

4 4 3

5 5 3

6 6 2

7 7 2

8 8 2

9 9 2

10 10 2

11 11 2

12 12 2

13 13 2

14 14 2

15 15 1

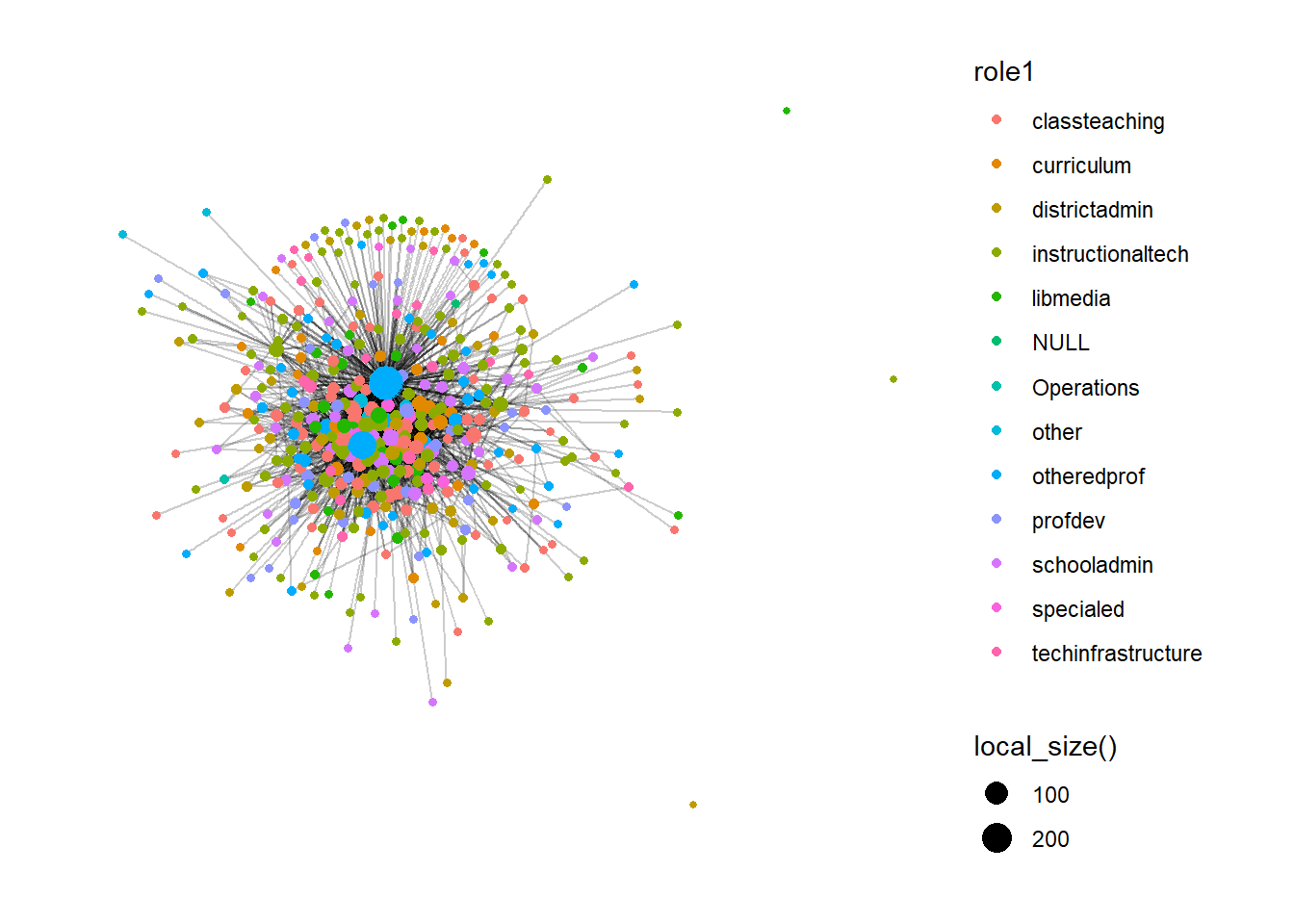

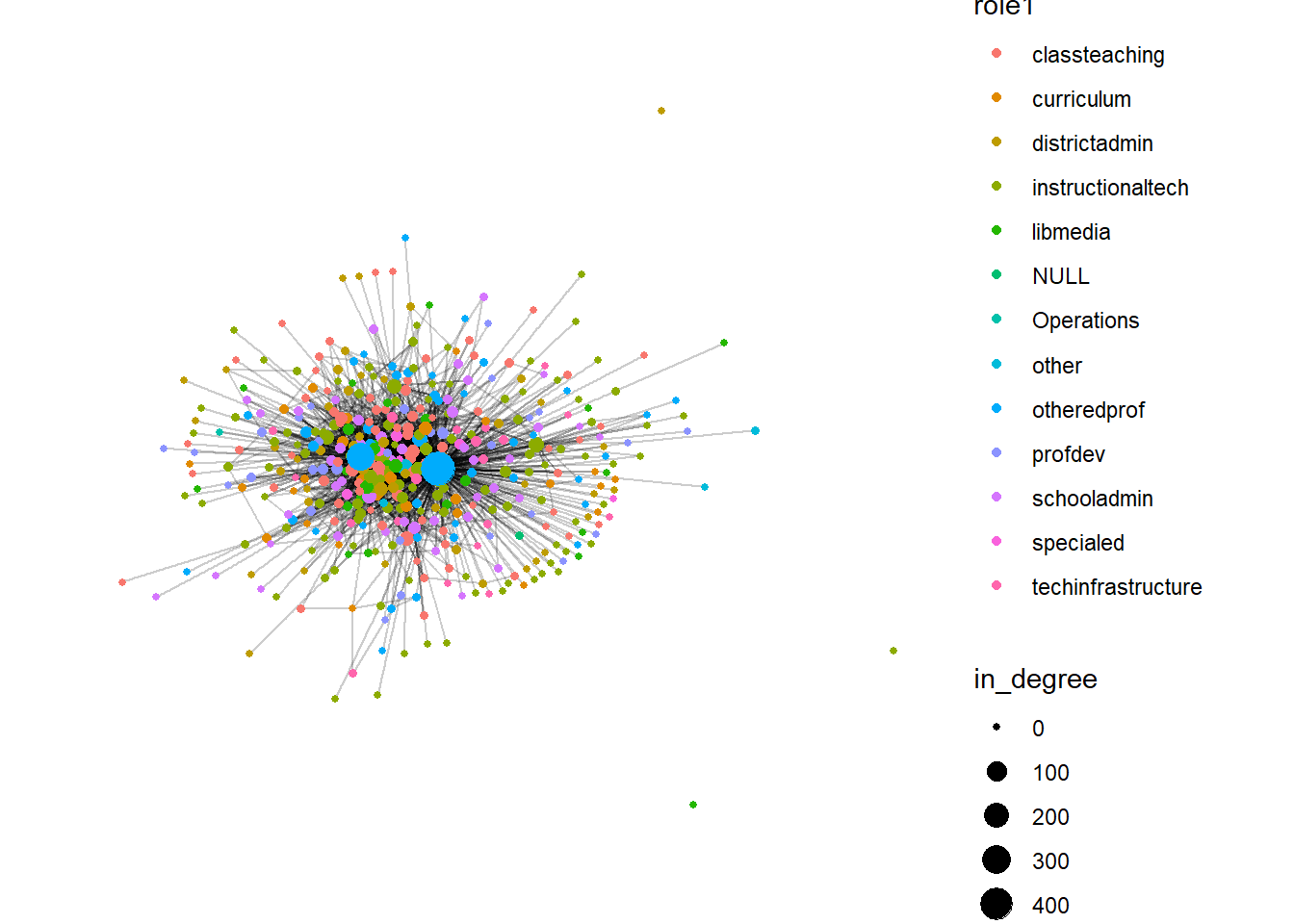

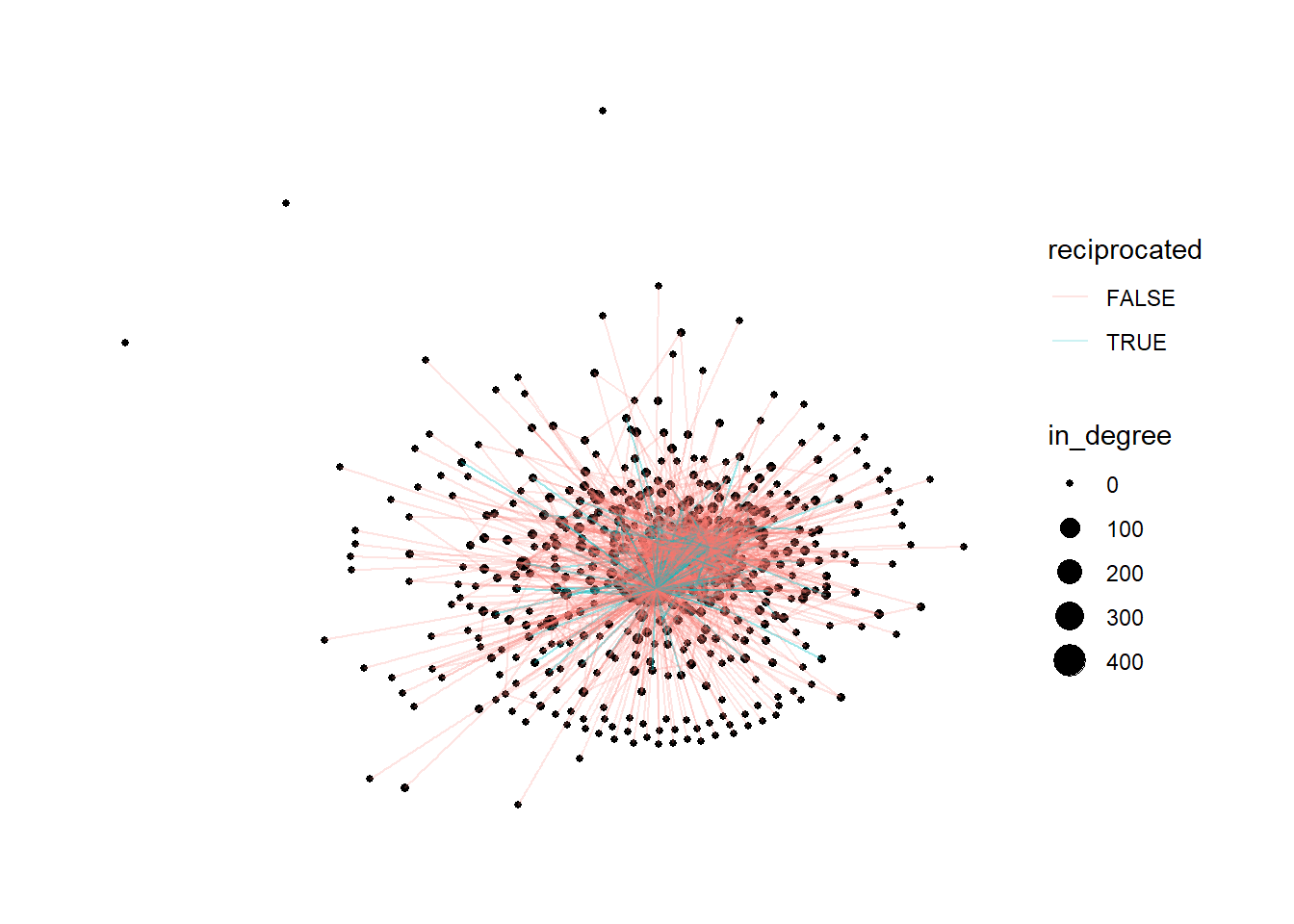

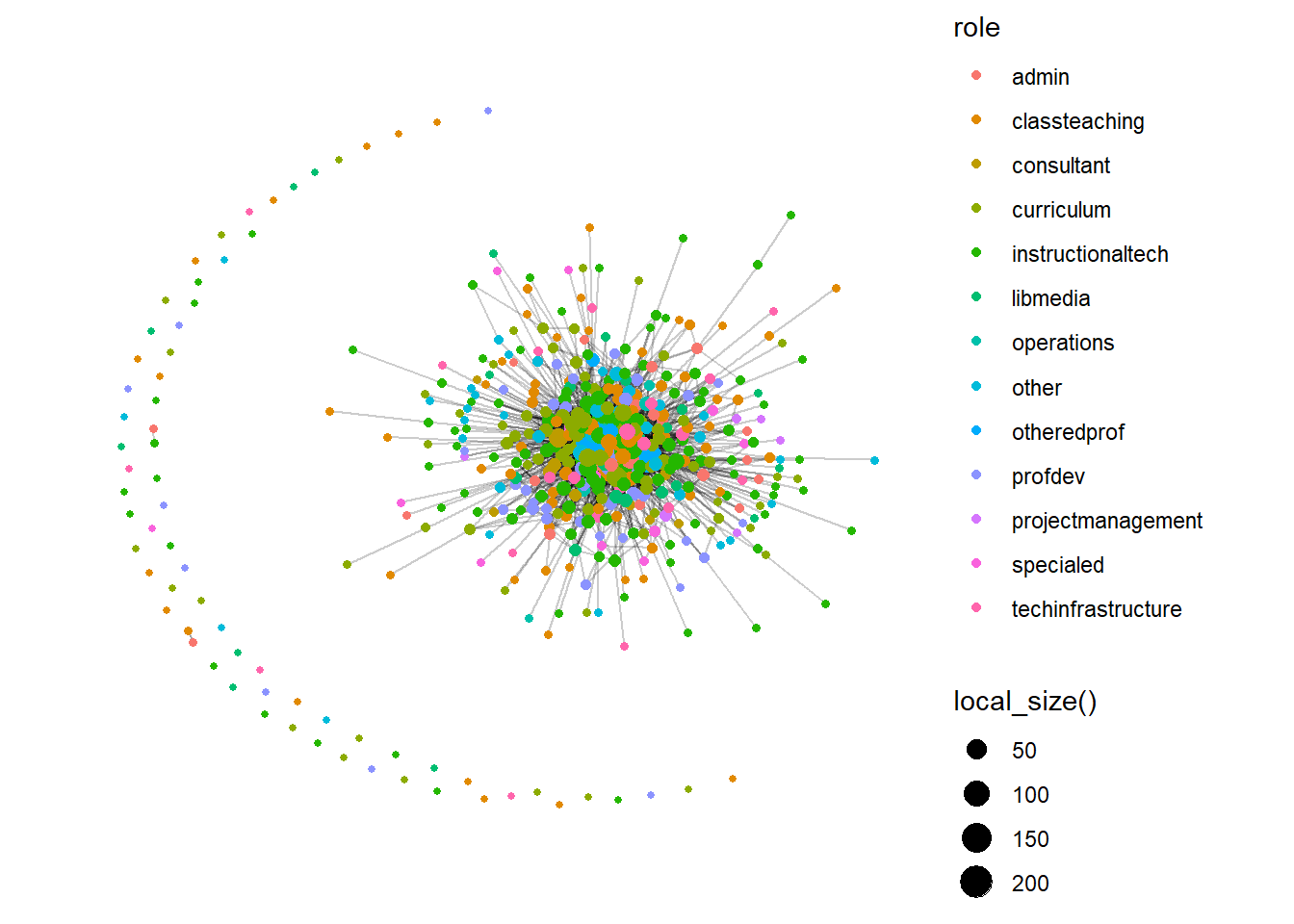

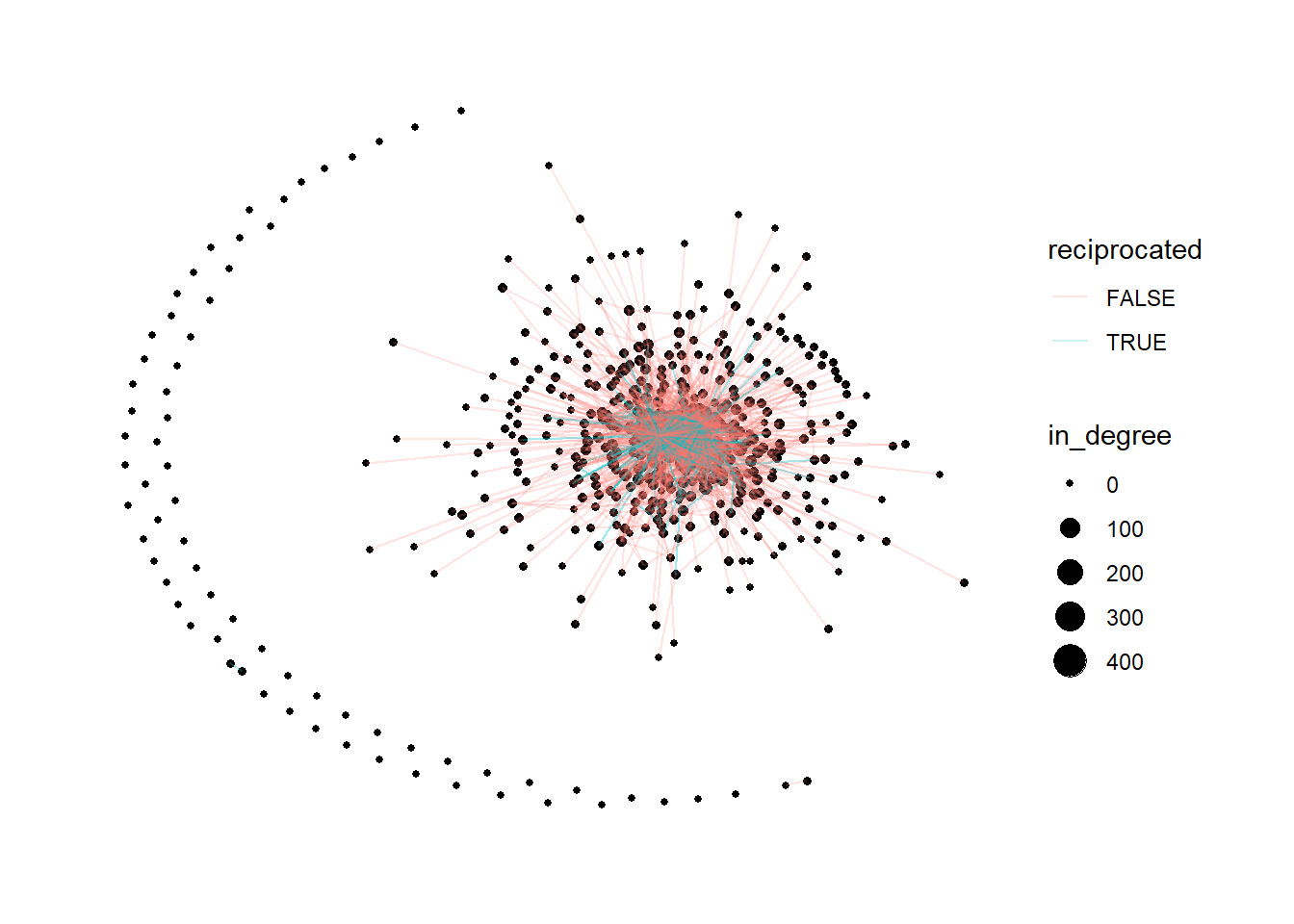

16 16 1Step 5: Egocentric Analysis – Centrality & Key Actors

ccss_network <- ccss_network |>

activate(nodes) |>

mutate(

size = local_size(),

in_degree = centrality_degree(mode = "in"),

out_degree = centrality_degree(mode = "out"),

closeness = centrality_closeness(),

betweenness = centrality_betweenness()

)

# Identify top actors by out_degree (transmitters), in_degree (transceivers), and both (transcenders)

top_transmitters <- ccss_network |> as_tibble() |> arrange(desc(out_degree)) |> head(5)

top_transceivers <- ccss_network |> as_tibble() |> arrange(desc(in_degree)) |> head(5)

# Transcenders: high in-degree AND out-degree (using top 10% threshold)

top_transcenders <- ccss_network |> as_tibble() |>

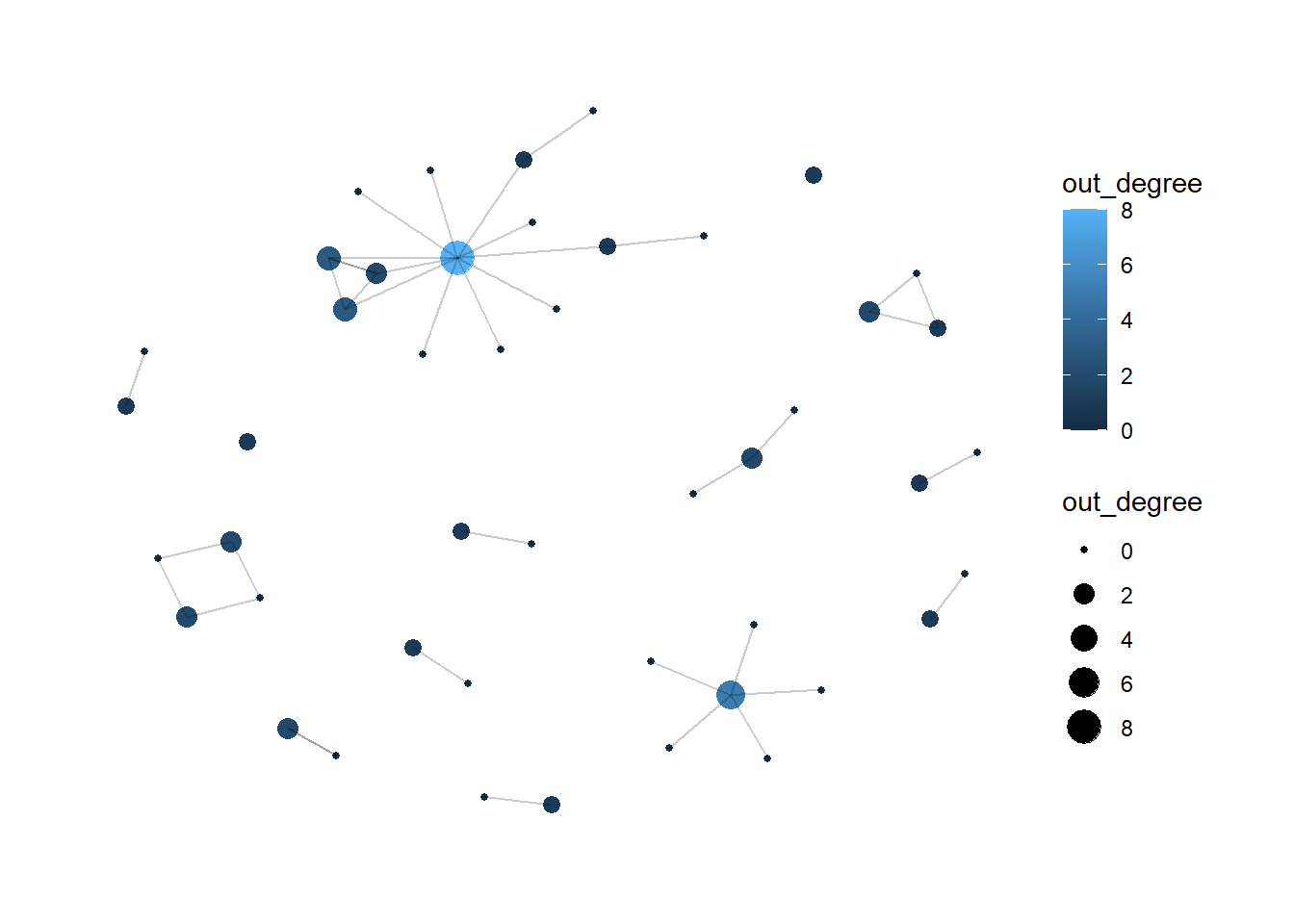

filter(out_degree > quantile(out_degree, 0.9) & in_degree > quantile(in_degree, 0.9))Step 6: Visualize the Network

ggraph(ccss_network, layout = "fr") +
  geom_node_point(aes(size = out_degree, color = out_degree)) +
  geom_edge_link(alpha = .2) +
  theme_graph() +
  theme(text = element_text(family = "sans"))

Step 7: Sentiment Analysis (Optional)

library(vader)

vader_ccss <- vader_df(ccss_tweets$text)

mean(vader_ccss$compound)

vader_ccss_summary <- vader_ccss |>
  mutate(sentiment = case_when(
    compound >= 0.05 ~ "positive",
    compound <= -0.05 ~ "negative",
    TRUE ~ "neutral"
  )) |>
  count(sentiment)

A sample methods section can be written for the steps above as follows:

Methods

Twitter data related to CCSS were imported from a CSV file containing sender, mentioned user(s), timestamp, and tweet text. The data were reshaped into a directed edge list, with the tweeting account treated as the sender and mentioned accounts treated as receivers. Tweets containing multiple mentions were separated so that each mention represented an individual directed tie, and tweets without mentions were excluded. A node list of unique actors was then constructed, and the resulting sender–receiver data were used to build a directed network.

Network structure was examined by identifying weak and strong components, cliques of three or more actors, and communities using edge-betweenness clustering on an undirected version of the network. To assess actor prominence, in-degree, out-degree, closeness, and betweenness centrality were calculated at the node level. Actors with the highest out-degree were treated as key transmitters, those with the highest in-degree as key receivers, and actors high on both measures as highly central participants. The network was visualized using a force-directed layout, with node size mapped to out-degree.

An optional sentiment analysis was conducted using VADER to summarize the emotional tone of tweet text. Compound sentiment scores were computed for each tweet and classified as positive, negative, or neutral using standard thresholds.

5.4.4 Results and Discussion

RQ1: Who are the “transmitters,” “transceivers,” and “transcenders” in the Common Core Twitter network?

In the Common Core Twitter network, a small number of actors dominated communication. Transmitters were led by SumayLu, who initiated eight outgoing ties, followed by DouglasHolt with five, WEquilSchool with three, fluttbot with three, and JoeWEquil with two. These accounts were the most active in broadcasting, mentioning, or retweeting others within the network.

The most prominent transceivers were WEquilSchool and SumayLu, each with an in-degree of three, followed by JoeWEquil, Tech4Learni…, and LASER_Insti…, each with an in-degree of two. These actors received the most attention from others and likely served as focal points in the conversation.

Only two users qualified as transcenders, meaning they were both highly active senders and frequent recipients of ties. WEquilSchool had an in-degree of three and an out-degree of three, while SumayLu had an in-degree of three and an out-degree of eight. These bridging actors appear to have played especially important roles in connecting and sustaining the discourse.
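The three roles can be illustrated on a toy network. The sketch below assumes a hypothetical five-account mention network (the names `a` to `e` are made up) and applies the same 90th-percentile rule used in Step 5, here with plain igraph functions rather than tidygraph.

```r
library(igraph)

# Hypothetical mention network: each row is one mention (from -> to)
mentions <- data.frame(
  from = c("a", "a", "a", "b", "b", "c", "d", "e"),
  to   = c("b", "c", "d", "a", "c", "a", "a", "b"),
  stringsAsFactors = FALSE
)
g <- graph_from_data_frame(mentions, directed = TRUE)

# Out-degree counts mentions sent; in-degree counts mentions received
roles <- data.frame(
  actor      = V(g)$name,
  out_degree = as.numeric(degree(g, mode = "out")),
  in_degree  = as.numeric(degree(g, mode = "in")),
  stringsAsFactors = FALSE
)

# Transcenders exceed the 90th percentile on both measures
out_cut <- quantile(roles$out_degree, 0.9)
in_cut  <- quantile(roles$in_degree, 0.9)
roles$transcender <- roles$out_degree > out_cut & roles$in_degree > in_cut

roles[order(-roles$out_degree), ]
```

Because the comparison is strict (`>`), a network with many tied degree values can yield few or no transcenders, so the cutoff is best treated as a tunable choice rather than a fixed definition.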

RQ2: What subgroups or factions exist in the network?

Component analysis indicates that the Common Core Twitter network was highly fragmented. There were 14 weakly connected components, with the largest containing 14 users, and many additional components consisting of only two or three members. This pattern suggests limited overall cohesion and multiple parallel or isolated conversations rather than one fully integrated discussion space.

Clique analysis identified six cliques of three or more actors, the largest containing four actors. That four-person clique involves nodes 3, 4, 5, and 6; four of the five three-person cliques are subsets of it, and the fifth involves nodes 39, 40, and 41. In effect, the network contains only two distinct tightly knit groups, which are small relative to the overall size of the network.
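When counting cliques, it helps to separate three quantities: the size of the largest clique, the number of all cliques, and the number of maximal cliques. The sketch below uses a small hypothetical network (not the Twitter data) in which four nodes are fully connected and three others form a separate triangle.

```r
library(igraph)

# Hypothetical undirected network: nodes 1-4 are all tied to one another,
# and nodes 5-7 form a separate triangle
el <- rbind(
  c(1, 2), c(1, 3), c(1, 4), c(2, 3), c(2, 4), c(3, 4),
  c(5, 6), c(5, 7), c(6, 7)
)
g <- graph_from_edgelist(el, directed = FALSE)

# Size of the largest clique (a clique size, not a count of cliques)
largest <- clique_num(g)

# Every clique with 3+ members, including those nested inside larger cliques
all_cliques <- cliques(g, min = 3)

# Only cliques that are not contained in any larger clique
maximal <- max_cliques(g, min = 3)

largest
length(all_cliques)
length(maximal)
```

`cliques()` reports every fully connected subset, so a single four-person clique also contributes its four three-person subsets. `max_cliques()` is often the more useful count when the goal is to describe distinct subgroups.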

Community detection using edge betweenness identified 16 subgroups, broadly consistent with the component structure. The largest subgroup contained 10 members, while most of the remaining communities were much smaller. Taken together, these results suggest a network organized around several small, partially isolated clusters rather than a single cohesive conversation.

RQ3: What is the overall sentiment in the network?

VADER sentiment analysis of the tweet content produced an average compound score of 0.09, indicating a slightly positive overall sentiment. Although the broader policy context surrounding the Common Core is often contentious, the sampled tweets were, on balance, more positive than negative.

When classifying individual tweets as positive, neutral, or negative, the distribution showed a mix of all three categories, with positive tweets slightly outnumbering negative ones. This pattern suggests that, at least during this period, the Twitter conversation included advocacy, supportive comments, and constructive dialogue, rather than being dominated by criticism or negativity.
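The classification rule can be checked on a handful of made-up scores. This sketch assumes a hypothetical vector of compound scores standing in for the `compound` column returned by the sentiment step, and applies the same thresholds of 0.05 and -0.05 in base R.

```r
# Hypothetical compound scores, one per tweet
compound <- c(0.62, 0.05, 0.00, -0.04, -0.05, -0.71)

# Same thresholds as the case_when() call in Step 7
sentiment <- ifelse(compound >= 0.05, "positive",
             ifelse(compound <= -0.05, "negative", "neutral"))

# Category counts and the overall average
table(sentiment)
mean(compound)
```

In this toy example the mean is close to zero even though two of the six tweets are clearly polarized, which is why the category counts are worth reporting alongside the average.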

RQ4: What other patterns of communication (e.g., centrality, clique formation, isolates) characterize this network?

The network exhibits a strongly centralized “star-like” structure within its largest component. Two users, SumayLu and WEquilSchool, emerge as central hubs, with high out-degree and in-degree centrality, respectively. The majority of other users have very low degree values, often 0 or 1, indicating that they are peripheral and engaged in few interactions.

Clique analysis identified six cliques of three or more users: one 4-person clique, the four 3-person cliques nested within it, and one separate 3-person clique. Such tightly knit groups are rare; most communication occurs outside of dense subgroups. The network contains 14 weak components, most of them very small, including two single-node components, seven dyads, and two triads. These actors are disconnected from the main conversation or only weakly integrated.

Overall, communication in this network is characterized by a small set of highly central users, sparse and fragmented connectivity, and many small, disconnected groups, which together suggest limited network-wide cohesion and a concentration of interaction around a few key accounts.

Discussion

This analysis of the Common Core Twitter conversation reveals a sparse and fragmented network, with debate distributed across many small subgroups and only one moderately sized connected component. Communication is anchored by a small number of key actors who act as broadcasters and focal points, while the majority of users occupy peripheral positions with minimal interaction.

Tightly connected cliques and communities are few and small, reinforcing the absence of broad network-wide cohesion. At the same time, VADER sentiment scores indicate a slightly positive overall tone, suggesting that the sampled conversation includes advocacy, promotional messaging, and constructive dialogue more than unrelenting criticism.

For researchers and practitioners, this pattern highlights the importance of intentionally engaging transcenders, the actors who both send and receive many ties, as potential bridges across subgroups. The network’s fragmentation also implies that broad influence is unlikely to arise from a single central core; instead, effective outreach may require engaging multiple small clusters independently. Combining social network analysis with sentiment analysis provides a richer understanding of the conversation, revealing not only who is communicating but also how the debate is structured and what tone it takes.

References

Supovitz, J., Daly, A.J., del Fresno, M., & Kolouch, C. (2017). #commoncore Project. Retrieved from http://www.hashtagcommoncore.com

Carolan, B.V. (2014). Social Network Analysis and Education: Theory, Methods & Applications. Sage.

Silge, J., & Robinson, D. (2017). Text Mining with R: A Tidy Approach. O’Reilly.

5.5 Summary

This chapter introduced social network analysis as a method for studying relational structures in educational settings. Through two case studies — a MOOC discussion network and a Twitter hashtag network — we demonstrated how to construct, measure, visualize, and interpret networks using R. For readers interested in exploring these methods further, the following resources are recommended:

Social Network Analysis Fundamentals

Hanneman, R. A., & Riddle, M. (2005). Introduction to Social Network Methods (online textbook).

Wasserman, S., & Faust, K. (1994). Social Network Analysis: Methods and Applications (Chapters 1–8).

Network Analysis in R

“Tidy Network Analysis in R” (Schoch, 2024) – a free, R‑centric guide to igraph, tidygraph, and ggraph.

tidygraph and ggraph package documentation (CRAN and tidyverse‑style vignettes).

Text Mining and Sentiment in R

Silge, J., & Robinson, D. (2017). Text Mining with R: A Tidy Approach (free online book).

VADER documentation and the vader/vaderR package guides for sentiment analysis of short text.

Online Learning and Discussion Networks

- Articles on network analysis of online discussions (e.g., studies of MOOC forum networks or course‑level discussion networks) that connect SNA metrics to pedagogical questions.

Practical R Notebooks / Tutorials

- Online tutorials or GitHub repos titled “Network analysis in R”, “Twitter network analysis”, or “sentiment analysis with tidytext + VADER” to replicate and extend the exact workflow used here.